Routing Engine (Enterprise Only)

LangDB's Routing Engine enables organizations to control how user requests are handled by AI models, optimizing for cost, performance, compliance, and user experience. By defining routing rules in JSON, businesses can automate decision-making, ensure reliability, and maximize value from their AI investments.

What is Routing and What are its Components?

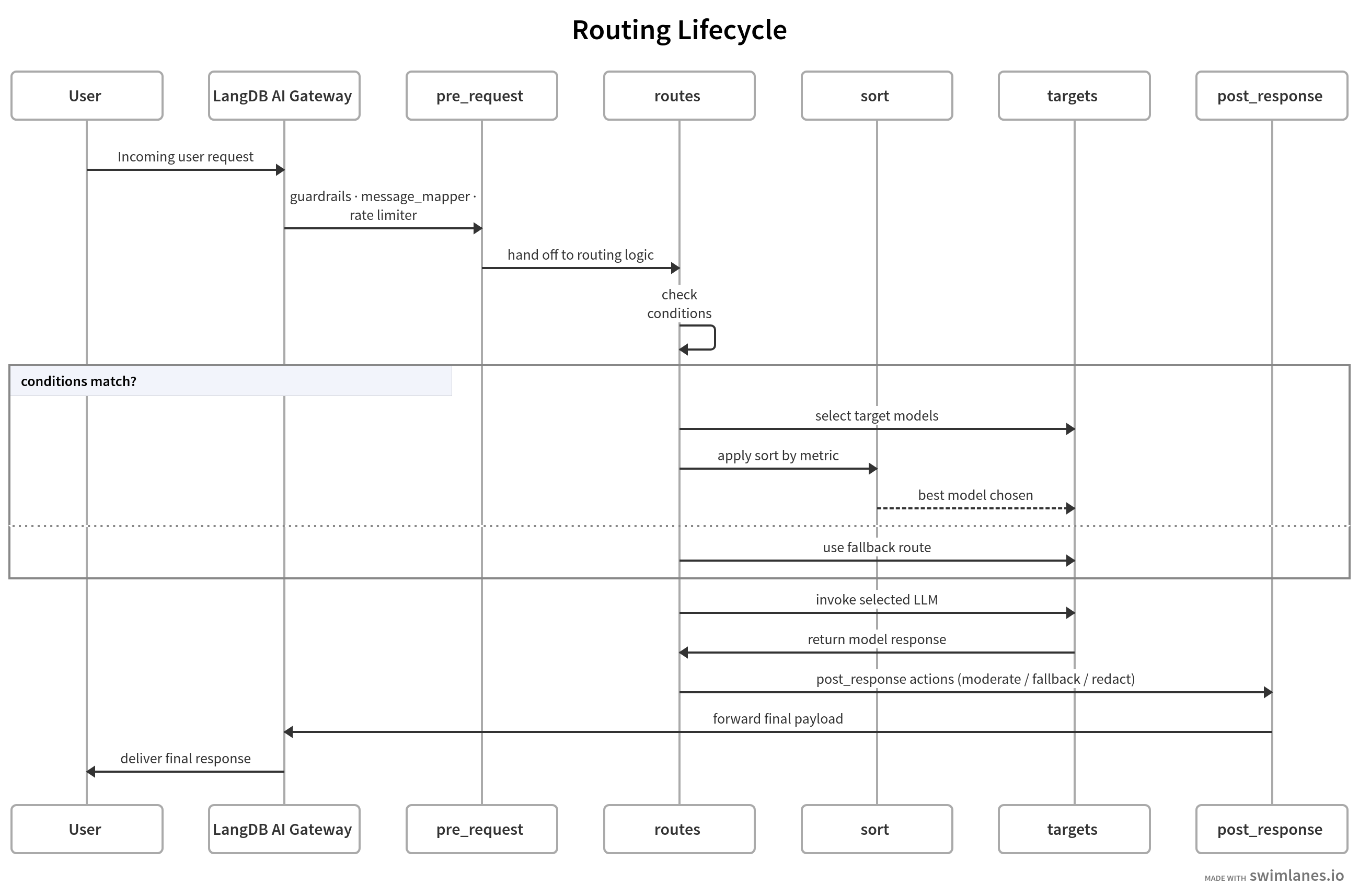

Routing is the process of directing an incoming request to the most appropriate AI model based on a set of rules. The LangDB router is composed of several key components that work together to execute this logic:

- Routes: These are the core building blocks of your router. A router is essentially a list of routes that are evaluated in order. The first route whose conditions are met is executed.

- Conditions: The logic that determines whether a route should be triggered. Conditions can evaluate request data, user metadata, or results from pre-request hooks.

- Targets: The destination for a request if a route's conditions are met. This is typically one or more AI models.

- Interceptors (Guardrails & Rate Limiters): These are pre-request hooks that can inspect, modify, or enrich a request before routing rules are evaluated. Their results can be used in conditions.

- Message Mapper: A component used to block a request or modify the final response, often for handling errors like rate limits.

Example Use Cases

| Enterprise Use Case | Business Goal | Key Variables & Metrics | Routing Logic Summary |
|---|---|---|---|
| SLA-Driven Tiering | Guarantee premium performance for high-value customers. | extra.user.tier, ttft | Route extra.user.tier: "premium" to models with the lowest ttft. |
| Geographic Compliance | Ensure data sovereignty and meet regulatory requirements (e.g., GDPR). | metadata.region, extra.user.tags | If metadata.region: "EU", route to models for users with tags: ["GDPR"]. |
| Intelligent Cost Management | Reduce operational expenses for internal or low-priority tasks. | metadata.group_name, price | If metadata.group_name: "internal", sort available models by "sort_by": "price". |
| Content-Aware Routing | Improve accuracy by using specialized models for specific topics. | pre_request.semantic_guardrail.result.topic | If topic: "finance", route to a finance-tuned model. |
| Brand Safety Enforcement | Prevent brand damage by blocking or redirecting inappropriate content. | pre_request.toxicity_guardrail.result.passed | If passed: false, block the request or route to a safe-reply model. |

For more detailed examples, see the pages below:

- Quick, focused routing patterns you can copy and adapt.

- End-to-end example of a multi-layer enterprise routing setup with tiering, cost, and fallbacks.

- Example showing rate limiting, semantic guardrails, GDPR routing, and error handling.

Anatomy of a Routing Request

A routing request is a standard chat completion request with two key additions:

- The `model` must be set to `"router/dynamic"`.

- A `router` object containing your routing logic must be included in the request body.

Here’s how the various components fit into a complete request. The example below shows a simple configuration with two routes: one for premium users and a fallback for everyone else.

```json
{
  "model": "router/dynamic",
  "messages": [
    {
      "role": "user",
      "content": "Our production API is down, I need help now!"
    }
  ],
  "extra": {
    "user": { "tier": "premium" }
  },
  "router": {
    "type": "conditional",
    "routes": [
      {
        "name": "premium_support_fast_track",
        "conditions": {
          "all": [
            { "extra.user.tier": { "$eq": "premium" } }
          ]
        },
        "targets": {
          "$any": ["anthropic/claude-4-opus", "openai/gpt-o3"],
          "sort_by": "ttft",
          "sort_order": "min"
        }
      },
      {
        "name": "default_fallback",
        "conditions": { "all": [] },
        "targets": "openai/gpt-4o-mini"
      }
    ]
  }
}
```
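
The two requirements above can be checked on the client before a request is sent. The following is a minimal Python sketch, not an official SDK: `build_routing_request` and `validate_routing_request` are hypothetical helper names, and the payload simply mirrors the JSON example in this section.

```python
import json


def build_routing_request(messages, routes, user=None):
    """Assemble a chat completion payload that uses the dynamic router."""
    payload = {
        "model": "router/dynamic",  # required for routed requests
        "messages": messages,
        "router": {"type": "conditional", "routes": routes},
    }
    if user is not None:
        payload["extra"] = {"user": user}
    return payload


def validate_routing_request(payload):
    """Return a list of problems; an empty list means the basic shape is fine."""
    problems = []
    if payload.get("model") != "router/dynamic":
        problems.append('model must be "router/dynamic"')
    router = payload.get("router")
    if not isinstance(router, dict):
        problems.append("router object is missing")
    elif not router.get("routes"):
        problems.append("router.routes is empty")
    return problems


routes = [
    {
        "name": "premium_support_fast_track",
        "conditions": {"all": [{"extra.user.tier": {"$eq": "premium"}}]},
        "targets": {
            "$any": ["anthropic/claude-4-opus", "openai/gpt-o3"],
            "sort_by": "ttft",
            "sort_order": "min",
        },
    },
    {
        "name": "default_fallback",
        "conditions": {"all": []},
        "targets": "openai/gpt-4o-mini",
    },
]

request_body = build_routing_request(
    [{"role": "user", "content": "Our production API is down, I need help now!"}],
    routes,
    user={"tier": "premium"},
)
print(json.dumps(request_body, indent=2))
print(validate_routing_request(request_body))
```

The sketch only validates the request shape locally; how the body is sent (endpoint, headers, authentication) depends on your LangDB setup and is deliberately left out.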

Routing Components Explained

This section breaks down each of the major components of the router object.

Routes

The routes property contains an array of route objects. These are evaluated sequentially from top to bottom, and the first route whose conditions are met will be executed. Every route must have a name, conditions, and targets.
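
First-match evaluation can be illustrated with a small Python sketch. This is not LangDB's implementation: `first_matching_route` and the simplified `required_tier` field are hypothetical stand-ins for real condition evaluation, used only to show why route order matters.

```python
from typing import Callable, Optional


def first_matching_route(routes: list, is_match: Callable[[dict], bool]) -> Optional[dict]:
    """Walk the routes top to bottom and return the first one that matches."""
    for route in routes:
        if is_match(route):
            return route
    return None  # no route matched


# Simplified routes: "required_tier" is a hypothetical shortcut for a conditions block.
routes = [
    {"name": "premium_support_fast_track", "required_tier": "premium"},
    {"name": "default_fallback", "required_tier": None},  # matches everyone
]


def make_matcher(user_tier):
    """Build a matcher for one user; a real router would evaluate conditions here."""
    def is_match(route):
        return route["required_tier"] in (None, user_tier)
    return is_match


premium = first_matching_route(routes, make_matcher("premium"))
free = first_matching_route(routes, make_matcher("free"))
print(premium["name"])  # premium_support_fast_track
print(free["name"])     # default_fallback
```

Because evaluation stops at the first match, a catch-all route belongs at the end of the list; placed first, it would shadow every route after it.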

Conditions

The conditions block defines when a route should be activated. It uses a flexible JSON-based syntax.

- Logical Operators: You can combine multiple conditions using `all` (AND) or `any` (OR).

- Comparison Operators: Conditions use operators like `$eq` (equal), `$neq` (not equal), `$in` (in array), `$lt` (less than), and `$gt` (greater than) to evaluate variables.

- Lazy Evaluation of Guardrails: Guardrails are evaluated lazily. A guardrail interceptor is executed only if the router encounters a condition that requires its result (`pre_request.{guardrail_name}.*`). This prevents unnecessary latency.
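
To make the condition syntax concrete, here is a minimal Python sketch of an evaluator for the operators listed above. It is an illustration under stated assumptions, not the router's actual logic: the function names are hypothetical, nested `all`/`any` groups are not handled, and treating a missing variable as a failed check is an assumption.

```python
import operator

# Comparison operators named in this section, mapped to Python equivalents.
OPERATORS = {
    "$eq": operator.eq,
    "$neq": operator.ne,
    "$lt": operator.lt,
    "$gt": operator.gt,
    "$in": lambda value, options: value in options,
}


def lookup(context, path):
    """Resolve a dotted path such as 'extra.user.tier'; None if absent."""
    current = context
    for part in path.split("."):
        if not isinstance(current, dict) or part not in current:
            return None
        current = current[part]
    return current


def check_clause(clause, context):
    """A clause maps one variable path to {operator: expected_value}."""
    for path, tests in clause.items():
        value = lookup(context, path)
        for op_name, expected in tests.items():
            # Assumption: a missing variable never satisfies a clause.
            if value is None or not OPERATORS[op_name](value, expected):
                return False
    return True


def evaluate(conditions, context):
    """Evaluate an 'all' (AND) group, an 'any' (OR) group, or a bare clause."""
    if "all" in conditions:
        return all(check_clause(c, context) for c in conditions["all"])
    if "any" in conditions:
        return any(check_clause(c, context) for c in conditions["any"])
    return check_clause(conditions, context)


context = {"extra": {"user": {"tier": "premium"}}, "metadata": {"region": "EU"}}

print(evaluate({"all": [{"extra.user.tier": {"$eq": "premium"}}]}, context))  # True
print(evaluate({"any": [{"metadata.region": {"$in": ["US", "CA"]}}]}, context))  # False
print(evaluate({"all": []}, context))  # True: an empty "all" always matches
```

The last call shows why `{ "all": [] }` works as a fallback condition: an AND over zero clauses is vacuously true.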

Targets

The targets block defines what happens when a route is matched. It specifies one or more models to which the request can be sent.

Specifying Models

You can specify models in your targets list in several ways, giving you flexibility in how you define your candidate pool.

- Exact Name with Provider: `openai/gpt-4o`. This is the most specific and recommended method. It uniquely identifies a single model from a single provider.

- Provider Wildcard: `openai/*`. This selects all available models from a specific provider (e.g., all models from OpenAI). This is useful for creating provider-level routing rules or fallbacks.

- Model Name Only: `claude-3-opus`. This selects all models with that name from any available provider. For example, if both Anthropic and another provider offered `claude-3-opus`, both would be added to the candidate pool. This is particularly useful when you want to use sorting to find the best provider for a specific model based on real-time metrics like `price` or `ttft`. Use with caution, as it can be ambiguous if providers have different capabilities for the same model name.
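
The three forms can be thought of as patterns expanded against a model catalog. The Python sketch below shows one way that expansion could work; `expand_target`, the `CATALOG` contents, and the `exampleprovider` entry are hypothetical and chosen only for illustration.

```python
# Hypothetical catalog of "provider/model" identifiers.
CATALOG = [
    "openai/gpt-4o",
    "openai/gpt-4o-mini",
    "anthropic/claude-3-opus",
    "exampleprovider/claude-3-opus",  # made-up second provider for the same model
]


def expand_target(pattern, catalog):
    """Expand one target pattern into the list of matching catalog entries."""
    if pattern.endswith("/*"):
        # Provider wildcard: every model from that provider.
        provider = pattern[:-2]
        return [m for m in catalog if m.split("/", 1)[0] == provider]
    if "/" in pattern:
        # Exact name with provider: at most one entry.
        return [m for m in catalog if m == pattern]
    # Model name only: the same model from any provider.
    return [m for m in catalog if m.split("/", 1)[1] == pattern]


print(expand_target("openai/gpt-4o", CATALOG))
print(expand_target("openai/*", CATALOG))
print(expand_target("claude-3-opus", CATALOG))
```

The name-only form returns two candidates here, which is the situation where sorting by `price` or `ttft` decides between providers.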

Filtering Models

Before selecting a model, you can filter the list of potential targets in $any using the filter property. This is useful for ensuring models meet certain real-time performance criteria.

```json
"targets": {
  "$any": ["anthropic/claude-4-opus", "openai/gpt-o3"],
  "filter": {
    "error_rate": { "$lt": 0.02 }
  }
}
```

This example ensures that only models with an error rate below 2% are considered.
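
A minimal Python sketch of the filtering step follows. The metric values are invented for illustration, and `apply_filter` is a hypothetical helper rather than part of LangDB; dropping models that have no data for a filtered metric is also an assumption.

```python
import operator

COMPARATORS = {"$lt": operator.lt, "$gt": operator.gt, "$eq": operator.eq}

# Made-up metric snapshot; a real router would read live values.
METRICS = {
    "anthropic/claude-4-opus": {"error_rate": 0.010},
    "openai/gpt-o3": {"error_rate": 0.035},
}


def apply_filter(candidates, filter_spec, metrics):
    """Keep only the candidates whose metrics satisfy every filter test."""
    kept = []
    for model in candidates:
        model_metrics = metrics.get(model, {})
        ok = all(
            # Assumption: a model with no data for a metric is filtered out.
            metric in model_metrics and COMPARATORS[op](model_metrics[metric], limit)
            for metric, tests in filter_spec.items()
            for op, limit in tests.items()
        )
        if ok:
            kept.append(model)
    return kept


targets = {
    "$any": ["anthropic/claude-4-opus", "openai/gpt-o3"],
    "filter": {"error_rate": {"$lt": 0.02}},
}

print(apply_filter(targets["$any"], targets["filter"], METRICS))
# ['anthropic/claude-4-opus']
```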

Sorting Models

After filtering, the router can sort the remaining candidate models to find the best one based on a specific metric.

- `sort_by`: The metric to use for sorting. Common values are `price`, `ttft` (time to first token), and `error_rate`.

- `sort_order`: The direction to sort, either `min` (for lowest cost/latency) or `max`.

```json
"targets": {
  "$any": ["mistral/mistral-large-latest", "anthropic/claude-4-sonnet"],
  "sort_by": "price",
  "sort_order": "min"
}
```

This example selects the cheapest model from the pool.
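
The selection step can be sketched in Python as follows. The price figures are invented (arbitrary units) and `pick_model` is a hypothetical helper; skipping models that lack the sort metric is an assumption, not documented behavior.

```python
# Made-up prices in arbitrary units; real values would come from live metrics.
METRICS = {
    "mistral/mistral-large-latest": {"price": 2.0},
    "anthropic/claude-4-sonnet": {"price": 3.0},
}


def pick_model(candidates, metrics, sort_by, sort_order="min"):
    """Pick the candidate with the lowest ("min") or highest ("max") metric value."""
    # Assumption: candidates without the metric cannot be ranked and are skipped.
    ranked = [m for m in candidates if sort_by in metrics.get(m, {})]
    if not ranked:
        return None
    chooser = min if sort_order == "min" else max
    return chooser(ranked, key=lambda m: metrics[m][sort_by])


targets = {
    "$any": ["mistral/mistral-large-latest", "anthropic/claude-4-sonnet"],
    "sort_by": "price",
    "sort_order": "min",
}

picked = pick_model(targets["$any"], METRICS, targets["sort_by"], targets["sort_order"])
print(picked)  # mistral/mistral-large-latest
```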

Interceptors (Guardrails & Rate Limiters)

Interceptors are hooks that run before the main routing logic is evaluated. They are defined in the pre_request array.

- Guardrails enforce content and safety policies (e.g., checking for toxicity or classifying topics).

- Rate Limiters enforce usage quotas to prevent abuse.

The results of these interceptors are made available in the pre_request variable space for use in your conditions. For detailed configuration, see Interceptors & Guardrails.
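
Lazy evaluation of interceptors can be pictured as a cache that runs a hook only when a condition first asks for its result. The Python sketch below is an illustration under assumptions: `LazyInterceptors`, the toy guardrail, and the result caching are hypothetical and do not describe LangDB internals.

```python
class LazyInterceptors:
    """Runs an interceptor only when its result is first requested."""

    def __init__(self, interceptors):
        self._interceptors = interceptors  # name -> callable(request) -> dict
        self._results = {}
        self.calls = []  # records which interceptors actually ran

    def result(self, name, request):
        if name not in self._results:
            self.calls.append(name)
            self._results[name] = self._interceptors[name](request)
        return self._results[name]


def toxicity_guardrail(request):
    # Toy check standing in for a real guardrail.
    text = request["messages"][-1]["content"].lower()
    return {"passed": "badword" not in text}


def rate_limiter(request):
    # Toy limiter that always allows the request.
    return {"passed": True}


request = {
    "messages": [{"role": "user", "content": "Hello"}],
    "extra": {"user": {"tier": "premium"}},
}
hooks = LazyInterceptors(
    {"toxicity_guardrail": toxicity_guardrail, "rate_limiter": rate_limiter}
)

# A condition on extra.user.tier needs no interceptor, so nothing has run yet.
is_premium = request["extra"]["user"]["tier"] == "premium"
print(hooks.calls)  # []

# A condition on pre_request.toxicity_guardrail.passed triggers that guardrail only.
passed = hooks.result("toxicity_guardrail", request)["passed"]
hooks.result("toxicity_guardrail", request)  # second lookup reuses the stored result
print(hooks.calls)  # ['toxicity_guardrail']
```

The rate limiter never runs in this example because no condition referenced it, which is the latency saving that lazy evaluation is meant to provide.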

Message Mapper

The Message Mapper is used to take direct control of the response. Its most common use case is to block a request that has failed an interceptor check and return a custom error message.

```json
{
  "name": "rate_limit_exceeded_block",
  "conditions": {
    "pre_request.rate_limiter.passed": { "$eq": false }
  },
  "message_mapper": {
    "modifier": "block",
    "content": "You have exceeded your daily quota."
  }
}
```
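
The effect of a blocking route can be sketched in Python. This is a simplified illustration: `apply_route`, the `forward` callback, and the shape of the returned dictionary are hypothetical and do not reflect the actual response format.

```python
def apply_route(route, forward):
    """Return the mapper's message for a blocking route, else forward to the targets."""
    mapper = route.get("message_mapper")
    if mapper and mapper.get("modifier") == "block":
        # The request never reaches a model; the custom message is returned instead.
        return {"blocked": True, "content": mapper["content"]}
    return {"blocked": False, "content": forward(route["targets"])}


forwarded = []  # records which targets were actually called


def forward(targets):
    # Stand-in for sending the request to a model.
    forwarded.append(targets)
    return f"(response from {targets})"


block_route = {
    "name": "rate_limit_exceeded_block",
    "conditions": {"pre_request.rate_limiter.passed": {"$eq": False}},
    "message_mapper": {
        "modifier": "block",
        "content": "You have exceeded your daily quota.",
    },
}
fallback_route = {
    "name": "default_fallback",
    "conditions": {"all": []},
    "targets": "openai/gpt-4o-mini",
}

blocked = apply_route(block_route, forward)
normal = apply_route(fallback_route, forward)
print(blocked["content"])  # You have exceeded your daily quota.
print(forwarded)           # ['openai/gpt-4o-mini']
```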

Metadata and Variables

Effective routing relies on rich contextual information. LangDB provides two main sources of data for your conditions: extra.user.* data, which you pass in the request, and metadata.* data, which is automatically populated by LangDB. For a complete list, see Variables & Functions.

Components Summary

| Component | Purpose | Key Configuration |
|---|---|---|
| Routes | A list of rules evaluated in order. | name, conditions, targets |
| Conditions | The "if" statement for a route. | all, any, operators ($eq, $lt), variables |
| Targets | The destination model(s) for a route. | $any, filter, sort_by, sort_order |
| Interceptors | Pre-request hooks for validation or enrichment. | pre_request array, type (guardrail, interceptor) |
| Message Mapper | Blocks or modifies the final response. | modifier: "block", content |

Performance Impact

Different routing components have different performance characteristics.

- Guardrails & Interceptors:

  - Simple checks like a Rate Limiter are very fast, typically involving a quick lookup in Redis.

  - More complex guardrails, especially those that are "LLM-as-a-judge" (i.e., they make an LLM call to evaluate the prompt), will introduce significant latency to the request, equal to the duration of that LLM call.

- Model Sorting:

  - Sorting models based on performance metrics (`ttft`, `error_rate`) or `price` requires fetching this data from a metrics store (like Redis). This is a very fast operation but does add a small, fixed overhead to each routed request.

Tracing & Observability

Routing decisions are fully transparent and traceable within the LangDB ecosystem.

- Routing Performance: The performance of the routing logic itself is tracked, so you can monitor for any overhead.

- Candidate & Picked Models: For every request, the trace records:

  - The initial pool of candidate models for a matched route.

  - The list of models remaining after filtering.

  - The final picked model after sorting.

- OpenTelemetry: All router metrics (decisions, latencies, error rates) are exported via OpenTelemetry, allowing you to integrate with your existing observability stack (e.g., Datadog, New Relic) for real-time analytics and alerting.
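
The three recorded stages (candidates, filtered list, picked model) can be modeled with a small Python sketch. The `RoutingTrace` fields and `route_with_trace` are hypothetical names for illustration and are not LangDB's trace schema; the metric values are invented.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class RoutingTrace:
    route: str
    candidates: list        # initial pool for the matched route
    after_filter: list      # models remaining after filtering
    picked: Optional[str]   # final choice after sorting, or None if nothing is left


def route_with_trace(route_name, candidates, metrics, max_error_rate, sort_by):
    """Filter by error rate, pick the lowest sort_by value, and record each stage."""
    after_filter = [m for m in candidates if metrics[m]["error_rate"] < max_error_rate]
    picked = min(after_filter, key=lambda m: metrics[m][sort_by], default=None)
    return RoutingTrace(route_name, list(candidates), after_filter, picked)


# Made-up metric snapshot.
METRICS = {
    "anthropic/claude-4-opus": {"error_rate": 0.010, "ttft": 0.9},
    "openai/gpt-o3": {"error_rate": 0.035, "ttft": 0.6},
    "openai/gpt-4o-mini": {"error_rate": 0.015, "ttft": 0.7},
}

trace = route_with_trace(
    "premium_support_fast_track", list(METRICS), METRICS, max_error_rate=0.02, sort_by="ttft"
)
print(asdict(trace))
```

In this run the fastest model is excluded by the error-rate filter, so the trace makes it visible why a slower model was picked.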