# Introduction to AI Gateway

Monitor, Govern and Secure your AI traffic.

## **What is an AI Gateway?**

An AI gateway is a middleware layer that acts as a unified access point to multiple LLMs, optimizing, securing, and managing AI traffic. It simplifies integration with different AI providers while enabling cost control, observability, and performance benchmarking. With an AI gateway, businesses can switch between models, monitor usage, and optimize costs.

LangDB provides OpenAI-compatible APIs to connect with multiple Large Language Models (LLMs) by changing just two lines of code.

Govern, Secure, and Optimize all of your AI Traffic with Cost Control, Optimisation and Full Observability.

**What AI Gateway Offers Out of the Box**

LangDB provides OpenAI-compatible APIs, enabling developers to connect with multiple LLMs by changing just two lines of code. With LangDB, you can:

* **Provide access to all major LLMs**\
  Ensure seamless integration with leading large language models to maximize flexibility and power.

* **No framework code required**\
  Enable plug-and-play functionality using any framework like Langchain, Vercel AI SDK, CrewAI, etc., for easy adoption.

* **Plug & Play Tracing & Cost Optimization**\
  Simplify implementation of tracing and cost optimization features, ensuring streamlined operations.

* **Automatic routing based on cost, quality, and other variables**\
  Dynamically route requests to the most suitable LLM based on predefined parameters.

* **Benchmark and provide insights**\
  Deliver insights into the best-performing models for specific tasks, such as coding or reasoning, to enhance decision-making.

**Quick Start with LangDB**

LangDB offers both managed and self-hosted versions for organisations to manage AI traffic. Choose between the **Hosted Gateway** for ease of use or the **Open-Source Gateway** for full control.

Quick Start

A full-featured, managed AI gateway that provides instant access to 250+ LLMs with enterprise-ready features.

#### Roadmap

* **Prompt Caching & Optimization** *(In Progress)*\
  Introduce caching mechanisms to optimize prompt usage and reduce redundant costs.

* **GuardRails** *(In Progress)*\
  Implement safeguards to enhance reliability and accuracy in AI outputs.

* **Leaderboard of models per category**\
  Create a comparative leaderboard to highlight model performance across categories.

* **Ready-to-use evaluations for non-data scientists**\
  Provide accessible evaluation tools for users without a data science background.

* **Readily fine-tunable data based on usage**\
  Offer pre-configured datasets tailored for fine-tuning, enabling customized improvements with ease.

# Quick Start

Quick Start guide for LangDB AI Gateway

The **LangDB AI Gateway** allows you to connect with multiple Large Language Models (LLMs) instantly, without any setup.

{% stepper %}

{% step %}

### Account Creation

Sign up on [LangDB](https://app.langdb.ai/) to start using the Hosted Gateway.

{% endstep %}

{% step %}

### Make your First Request

Test a chat window with two different models to see dynamic routing in action.

{% endstep %}

{% step %}

### Check Out the Samples Section for Template Code

Use ready-made templates to integrate LangDB into your project effortlessly.

{% endstep %}

{% step %}

### Analytics Section

Monitor usage, costs, and performance insights through the LangDB analytics dashboard.

{% endstep %}

{% endstepper %}

# Working with API

LangDB provides access to 350+ LLMs with OpenAI-compatible APIs.

You can use LangDB as a drop-in replacement for the OpenAI APIs, making it easy to integrate into existing workflows and libraries such as the OpenAI client SDK.

You can choose from any of the [supported models](https://app.langdb.ai/models).

{% tabs %}

{% tab title="Python" %}

```python

from openai import OpenAI

langdb_project_id = "xxxxx"  # LangDB Project ID

client = OpenAI(
    base_url=f"https://api.us-east-1.langdb.ai/{langdb_project_id}/v1",
    api_key="xxxxx",  # LangDB token
)

response = client.chat.completions.create(
    model="anthropic/claude-sonnet-4",  # Change Model
    messages=[
        {"role": "system", "content": "You are a helpful assistant"},
        {"role": "user", "content": "What are the earnings of Apple in 2022?"},
    ],
)

print("Assistant:", response.choices[0].message)

```

{% endtab %}

{% tab title="TypeScript" %}

```typescript

import { OpenAI } from 'openai';

const langdbProjectId = 'xxxx'; // LangDB Project ID

const client = new OpenAI({
  baseURL: `https://api.us-east-1.langdb.ai/${langdbProjectId}/v1`,
  apiKey: 'xxxx', // Your LangDB token
});

const messages = [
  {
    role: 'system',
    content: 'You are a helpful assistant.'
  },
  {
    role: 'user',
    content: 'What are the earnings of Apple in 2022?'
  }
];

async function getAssistantReply() {
  const { choices } = await client.chat.completions.create({
    model: 'gpt-4o-mini',
    messages: messages
  });
  console.log('Assistant:', choices[0].message.content);
}

getAssistantReply();

```

{% endtab %}

{% tab title="cURL" %}

```bash

curl "https://api.us-east-1.langdb.ai/v1/chat/completions" \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer $LANGDB_API_KEY" \
  -H "X-Project-Id: $Project_ID" \
  -d '{
    "model": "gpt-4o",
    "messages": [
      {
        "role": "user",
        "content": "Write a haiku about recursion in programming."
      }
    ],
    "temperature": 0.8
  }'

```

{% endtab %}

{% endtabs %}

After sending your request, you can see the traces on the dashboard.

[Check out the API reference here.](https://docs.langdb.ai/docs/api-reference)

# Working with Multiple Agents

Learn how to use LangDB to trace multi-agent workflows.

LangDB automatically visualizes how agents interact, providing a clear view of workflows, hierarchies, and usage patterns by adding [`run`](https://docs.langdb.ai/ai-gateway/concepts/run) and [`thread`](https://docs.langdb.ai/ai-gateway/concepts/thread) headers.

This allows developers to track interactions between agents seamlessly, ensuring clear visibility into workflows and dependencies.

## What is a Multi-Agent System?

A multi-agent system consists of independent agents collaborating to solve complex tasks. Agents handle various roles such as user interaction, data processing, and workflow orchestration. LangDB streamlines tracking these interactions for better efficiency and transparency.

## Why Track Workflows?

Tracking ensures:

* **Clear Execution Flow**: Understand how agents interact.

* **Performance Optimization**: Identify bottlenecks.

* **Reliability & Accountability**: Improve transparency.

LangDB supports two main concepts:

* [**Run**](https://docs.langdb.ai/docs/concepts/run): A complete end-to-end interaction between agents, grouped for easy tracking.

* [**Thread**](https://docs.langdb.ai/docs/concepts/thread): An aggregation of multiple Runs into a single thread for a unified chat experience.
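The relationship between the two concepts can be sketched with a small helper. This is a minimal illustration, assuming IDs are UUID strings and that they are passed in the `x-thread-id` and `x-run-id` request headers; `new_run_headers` is a hypothetical helper name, not part of any LangDB SDK.

```python
from uuid import uuid4


def new_run_headers(thread_id: str) -> dict[str, str]:
    """Build headers for a fresh run inside an existing thread (hypothetical helper)."""
    return {"x-thread-id": thread_id, "x-run-id": str(uuid4())}


# One thread groups the whole conversation; each end-to-end interaction gets its own run.
thread_id = str(uuid4())
first_run = new_run_headers(thread_id)
second_run = new_run_headers(thread_id)

# Both runs share the thread ID but carry distinct run IDs.
print(first_run["x-thread-id"] == second_run["x-thread-id"])
print(first_run["x-run-id"] != second_run["x-run-id"])
```

Every agent call that belongs to the same interaction would reuse one of these header dictionaries unchanged, so the calls are grouped under the same run.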

Example

Using the same **Run ID** and **Thread ID** across multiple agents ensures seamless tracking, maintaining context across interactions and providing a complete view of the workflow.

```python

from openai import OpenAI
from uuid import uuid4

langdb_project_id = "xxxxx"  # LangDB Project ID
api_key = "xxxxx"  # Replace with your LangDB token

client = OpenAI(
    base_url=f"https://api.us-east-1.langdb.ai/{langdb_project_id}/v1",  # LangDB API base URL
    api_key=api_key,
)

# Share one thread ID and one run ID across both agents
thread_id = str(uuid4())
run_id = str(uuid4())

# Agent 1 answers the user
response1 = client.chat.completions.create(
    model="gpt-4o",
    messages=[
        {"role": "developer", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Hello!"},
    ],
    extra_headers={"x-thread-id": thread_id, "x-run-id": run_id},
)

# Agent 2 processes the response
response2 = client.chat.completions.create(
    model="gpt-4o",
    messages=[
        {"role": "developer", "content": "Processing user input."},
        {"role": "user", "content": response1.choices[0].message.content},
    ],
    extra_headers={"x-thread-id": thread_id, "x-run-id": run_id},
)

```

Check out the full Multi-Agent Tracing Example [here](https://github.com/langdb/langdb-samples/blob/main/examples/langchain/langchain-multi-agent/langchain-multi-agent.ipynb).

# Working with Agent Frameworks

Enable end-to-end tracing for AI agent frameworks with LangDB’s one-line init() integration.

LangDB integrates seamlessly with a variety of agent libraries to provide out-of-the-box tracing, observability, and cost insights. By simply initializing the LangDB client adapter for your agent framework, LangDB **monkey‑patches** the underlying client to inject tracing hooks—no further code changes required.

### Prerequisites

* **LangDB Core** installed:

```

pip install 'pylangdb'

```

* **Optional feature flags** (for framework-specific tracing):

```

pip install 'pylangdb[<feature>]'

# e.g. pylangdb[adk], pylangdb[openai_agents]

```

* **Environment Variables** set:

```bash

export LANGDB_API_KEY="xxxxx"

export LANGDB_PROJECT_ID="xxxxx"

```
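A quick preflight check can catch missing credentials before any agent code runs. The sketch below is only an illustration: `missing_langdb_vars` is a hypothetical helper, and the only assumption is that the two variables listed above must be non-empty.

```python
import os

REQUIRED_VARS = ("LANGDB_API_KEY", "LANGDB_PROJECT_ID")


def missing_langdb_vars(env=None) -> list[str]:
    """Return the names of required variables that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]


# Example with an explicit mapping instead of the real environment
print(missing_langdb_vars({"LANGDB_API_KEY": "xxxxx"}))  # ['LANGDB_PROJECT_ID']
```

Calling `missing_langdb_vars()` with no argument checks the real process environment, so it can run at the top of a script and fail fast with a clear message.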

## Quick Start

Import and initialize once, before creating or running any agents:

```python

from pylangdb.<framework> import init  # e.g. pylangdb.adk

# Monkey‑patch the client for tracing

init()

# ...then your existing agent setup...

```

> **Monkey‑patching note:** The `init()` call wraps key client methods at runtime to capture telemetry. Ensure it runs as early as possible.
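To see why call order matters, here is a generic sketch of the monkey-patching technique. It is not pylangdb's actual implementation: `FakeClient`, `captured`, and this local `init` are made-up names used only to show how a method can be wrapped at runtime to record telemetry.

```python
import functools
import time


class FakeClient:
    """Stand-in for an LLM client; not a real LangDB or provider class."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


captured = []  # telemetry records collected by the wrapper


def init() -> None:
    """Replace FakeClient.complete with a traced wrapper (illustrative only)."""
    original = FakeClient.complete

    @functools.wraps(original)
    def traced(self, prompt: str) -> str:
        start = time.perf_counter()
        result = original(self, prompt)
        captured.append({
            "method": "complete",
            "prompt": prompt,
            "latency_s": time.perf_counter() - start,
        })
        return result

    FakeClient.complete = traced


init()  # patch first...
client = FakeClient()  # ...then create and use clients as usual
reply = client.complete("hello")
print(reply, len(captured))
```

Code that grabbed a reference to the original method before the patch was applied would bypass the wrapper, which is one reason to call the initializer as early as possible.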

**GitHub Repo:**

### Example: Google ADK

```bash

pip install 'pylangdb[adk]'

```

```python

from pylangdb.adk import init

init()

from google.adk.agents import Agent

# (rest of your Google ADK agent code)

```

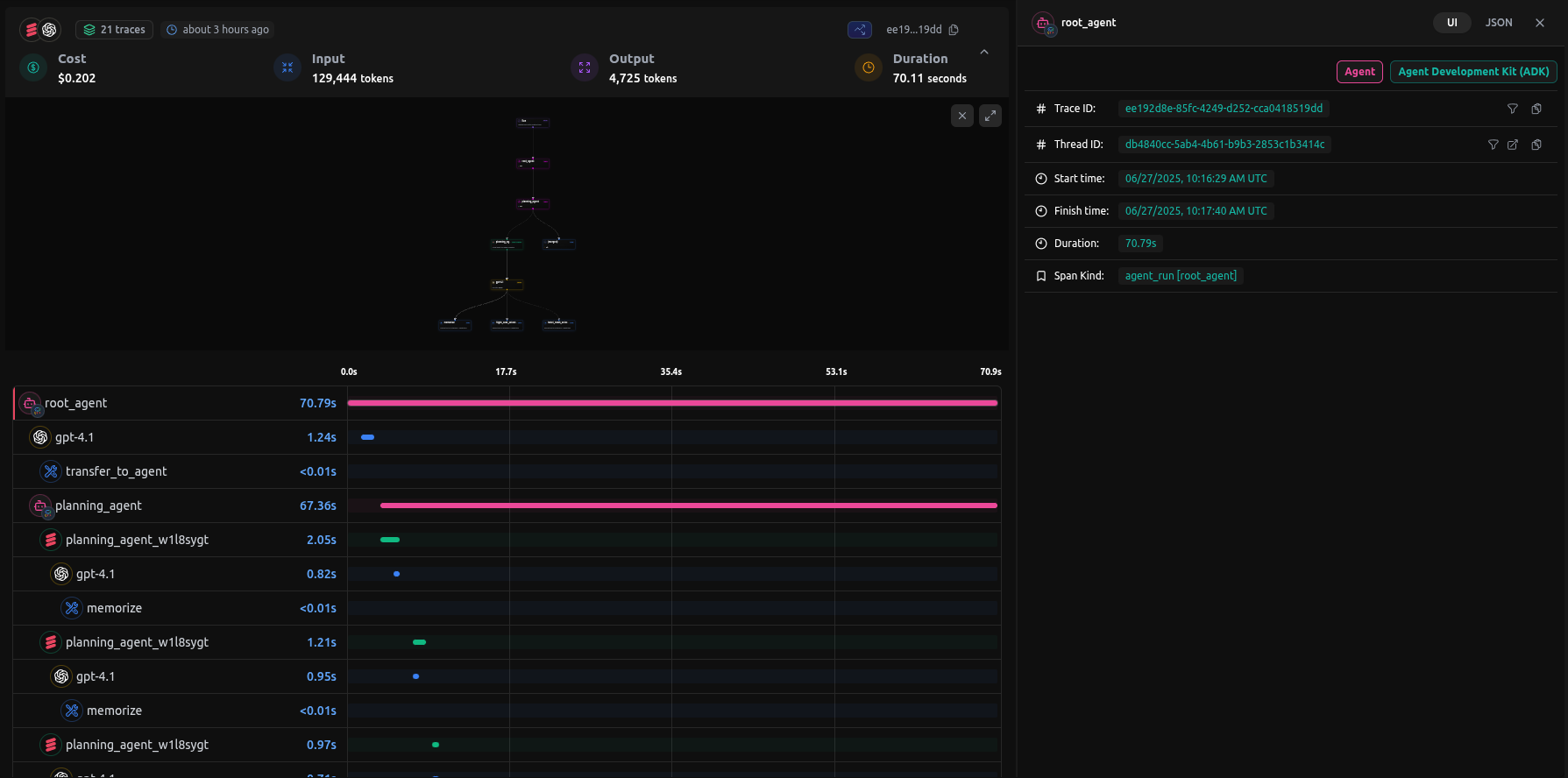

This is an example of a complete end-to-end trace using Google ADK and LangDB.

LangDB’s ADK adapter captures request/response metadata, token usage, and latency metrics automatically. During initialization it discovers and wraps all agents and sub‑agents in subfolders, linking their sessions for full end‑to‑end tracing across your workflow.

## Supported Frameworks

## Further Documentation

For full documentation, including client capabilities, configuration, and detailed examples, check out the [Python SDK documentation](https://docs.langdb.ai/docs/python-sdk/getting-started) and [GitHub](https://github.com/langdb/pylangdb).

# Working with Google ADK

Instrument Google ADK pipelines with LangDB—capture nested agent flows, token usage, and latency metrics using a single init() call.

LangDB’s Google ADK integration provides end-to-end tracing for your ADK agent pipelines.

## Installation

Enable end-to-end tracing for your Google ADK agents by installing the `pylangdb` client with the ADK feature flag:

```bash

pip install 'pylangdb[adk]'

```

## Quick Start

Set your environment variables **before** running the script:

```bash

export LANGDB_API_KEY=""

export LANGDB_PROJECT_ID=""

```

Initialize LangDB **before** creating or running any ADK agents:

```python

from pylangdb.adk import init

# Initialise LangDB

init()

# Then proceed with your normal ADK setup:

from google.adk.agents import Agent

# ...define and run agents...

```

Once initialized, LangDB automatically discovers all agents and sub-agents (including those in nested folders), wraps their key methods at runtime, and links sessions for full end-to-end tracing across your workflow.

## Complete Google ADK Python Example

Here's a full example of a Google ADK agent implementation that you can instrument with LangDB. This sample is based on the official [Google ADK Quickstart](https://google.github.io/adk-docs/get-started/quickstart/).

### Example code

> Check out the full sample on GitHub:

### Setup Environment

```bash

pip install google-adk litellm 'pylangdb[adk]'

```

### Project Structure

Create the following project structure:

```

parent_folder/

└── multi_tool_agent/

├── __init__.py

├── agent.py

└── .env

```

### \_\_init\_\_.py

Create an `__init__.py` file in the `multi_tool_agent` folder:

```python

from . import agent

```

### .env

Create a `.env` file for your secrets:

```bash

LANGDB_API_KEY=""

LANGDB_PROJECT_ID=""

```

### agent.py

Create an `agent.py` file with the following code:

```python

# First initialize LangDB before defining any agents
from pylangdb.adk import init
init()

import datetime
from zoneinfo import ZoneInfo
from google.adk.agents import Agent


def get_weather(city: str) -> dict:
    if city.lower() != "new york":
        return {"status": "error", "error_message": f"Weather information for '{city}' is not available."}
    return {"status": "success", "report": "The weather in New York is sunny with a temperature of 25 degrees Celsius (77 degrees Fahrenheit)."}


def get_current_time(city: str) -> dict:
    if city.lower() != "new york":
        return {"status": "error", "error_message": f"Sorry, I don't have timezone information for {city}."}
    tz = ZoneInfo("America/New_York")
    now = datetime.datetime.now(tz)
    return {"status": "success", "report": f'The current time in {city} is {now.strftime("%Y-%m-%d %H:%M:%S %Z%z")}'}


root_agent = Agent(
    name="weather_time_agent",
    model="gemini-2.0-flash",
    description=("Agent to answer questions about the time and weather in a city."),
    instruction=("You are a helpful agent who can answer user questions about the time and weather in a city."),
    tools=[get_weather, get_current_time],
)

```

### Running Your Agent

Navigate to the parent directory of your agent project and run the following command:

```bash

adk web

```

Open the URL printed in your terminal in a browser and select "multi\_tool\_agent" from the dropdown menu.

Once your agent is running, try these example queries to test its functionality:

```

What's the weather in New York?

```

These queries trigger the agent to call the functions defined above and respond based on the agent workflow.
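Because the tools are plain Python functions, they can also be checked directly without starting the agent. The sketch below copies the `get_weather` logic from `agent.py` into a standalone snippet (the report text is shortened here) so it runs without Google ADK installed; it is a sanity check, not a replacement for testing through the agent.

```python
def get_weather(city: str) -> dict:
    """Same branching as the get_weather tool in agent.py, with a shortened report."""
    if city.lower() != "new york":
        return {
            "status": "error",
            "error_message": f"Weather information for '{city}' is not available.",
        }
    return {"status": "success", "report": "The weather in New York is sunny."}


# Exercise both branches: the one supported city and an unsupported one.
for city in ("New York", "Paris"):
    result = get_weather(city)
    print(city, "->", result["status"])
```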

### Traces on LangDB

When you run queries against your ADK agent, LangDB automatically captures detailed traces of all agent interactions.

## Next Steps: Advanced Google ADK Integration

This guide covered the basics of integrating LangDB with Google ADK using a simple weather and time agent example. For more complex scenarios and advanced use cases, check out the resources in the [Guides section](https://app.gitbook.com/s/jwXvc8GvLP6kUvOASaLP/).

# Working with OpenAI Agents SDK

Trace OpenAI Agents SDK workflows end-to-end with LangDB—monitor model calls, tool invocations, and runner sessions via one-line init().

LangDB helps you add full tracing and observability to your OpenAI Agents SDK workflows—without changing your core logic. With a one-line initialization, LangDB captures model calls, tool invocations, and intermediate steps, giving you a complete view of how your agent operates.

## Installation

Enable end-to-end tracing for your OpenAI Agents SDK agents by installing the `pylangdb` client with the `openai` feature flag:

```bash

pip install 'pylangdb[openai]'

```

## Quick Start

### Export Environment Variables

Set your LangDB credentials:

```bash

export LANGDB_API_KEY=""

export LANGDB_PROJECT_ID=""

```

### Initialize Tracing

Import and run `init()` **before** configuring your OpenAI client:

```python

from pylangdb.openai import init

# Initialise LangDB

init()

```

### Configure OpenAI Client and Agent Runner

```python

import os
import uuid

# Agent SDK imports
from agents import (
    Agent,
    Runner,
    set_default_openai_client,
    RunConfig,
    ModelProvider,
    Model,
    OpenAIChatCompletionsModel,
)
from openai import AsyncOpenAI

# Configure the OpenAI client with LangDB headers
# (LANGDB_API_BASE_URL must also be set in your environment)
client = AsyncOpenAI(
    api_key=os.environ["LANGDB_API_KEY"],
    base_url=os.environ["LANGDB_API_BASE_URL"],
    default_headers={"x-project-id": os.environ["LANGDB_PROJECT_ID"]},
)
set_default_openai_client(client)


# Create a custom model provider for advanced routing
class CustomModelProvider(ModelProvider):
    def get_model(self, model_name: str | None) -> Model:
        return OpenAIChatCompletionsModel(model=model_name, openai_client=client)


agent = Agent(
    name="Math Tutor",
    instructions="You are a helpful assistant",
    model="openai/gpt-4.1",  # Choose any model available on LangDB
)

# Register your custom model provider to route model calls through LangDB
CUSTOM_MODEL_PROVIDER = CustomModelProvider()

# Assign a unique group_id to link all steps in this session trace
group_id = str(uuid.uuid4())

# Top-level await: run this inside an async function or a notebook cell
response = await Runner.run(
    agent,
    input="Hello, world!",
    run_config=RunConfig(
        model_provider=CUSTOM_MODEL_PROVIDER,  # Inject custom model provider
        group_id=group_id,  # Link all steps to the same trace
    ),
)

```

Once executed, LangDB links all steps—model calls, intermediate tool usage, and runner orchestration—into a single session trace.

## Complete OpenAI Agents SDK Example

Here is a full example, based on the [OpenAI Agents SDK Quickstart](https://openai.github.io/openai-agents-python/quickstart/), that uses LangDB tracing.

### Example code

> Check out the full sample on GitHub:

### Setup Environment

```

pip install openai-agents 'pylangdb[openai]'

```

### Export Environment Variables

```bash

export LANGDB_API_KEY=""

export LANGDB_PROJECT_ID=""

```

### main.py

```python

# Initialize LangDB tracing
from pylangdb.openai import init
init()

# Agent SDK imports
from agents import (
    Agent,
    Runner,
    set_default_openai_client,
    set_default_openai_key,
    set_default_openai_api,
    RunConfig,
    ModelProvider,
    Model,
    OpenAIChatCompletionsModel,
)
from openai import AsyncOpenAI
import os
import uuid
import asyncio

# Configure the OpenAI client with LangDB headers
client = AsyncOpenAI(
    api_key=os.environ["LANGDB_API_KEY"],
    base_url=os.environ["LANGDB_API_BASE_URL"],
    default_headers={"x-project-id": os.environ["LANGDB_PROJECT_ID"]},
)

# Set the configured client as default with tracing enabled
set_default_openai_client(client, use_for_tracing=True)
set_default_openai_api(api="chat_completions")
# set_default_openai_key(os.environ["LANGDB_API_KEY"])


# Create a custom model provider for advanced routing
class CustomModelProvider(ModelProvider):
    def get_model(self, model_name: str | None) -> Model:
        return OpenAIChatCompletionsModel(model=model_name, openai_client=client)


# Register your custom model provider to route model calls through LangDB
CUSTOM_MODEL_PROVIDER = CustomModelProvider()

math_tutor_agent = Agent(
    name="Math Tutor",
    handoff_description="Specialist agent for math questions",
    instructions="You provide help with math problems. Explain your reasoning at each step and include examples",
    model="anthropic/claude-3.7-sonnet",
)

history_tutor_agent = Agent(
    name="History Tutor",
    handoff_description="Specialist agent for historical questions",
    instructions="You provide assistance with historical queries. Explain important events and context clearly.",
    model="gemini/gemini-2.0-flash",  # Choose any model available on LangDB
)

triage_agent = Agent(
    name="Triage Agent",
    instructions="You determine which agent to use based on the user's homework question",
    handoffs=[history_tutor_agent, math_tutor_agent],
    model="openai/gpt-4o-mini",  # Choose any model available on LangDB
)

# Assign a unique group_id to link all steps in this session trace
group_id = str(uuid.uuid4())


# Define async function to run the agent
async def run_agent():
    response = await Runner.run(
        triage_agent,
        input="who was the first president of the united states?",
        run_config=RunConfig(
            model_provider=CUSTOM_MODEL_PROVIDER,  # Inject custom model provider
            group_id=group_id,  # Link all steps to the same trace
        ),
    )
    print(response.final_output)


# Run the async function with asyncio
asyncio.run(run_agent())

```

### Running Your Agent

Navigate to the directory that contains `main.py` and run the following command:

```bash

python main.py

```

#### Output:

```

The first president of the United States was **George Washington**.

Here's some important context:

* **The American Revolution (1775-1783):** Washington was the commander-in-chief of the Continental Army during the Revolutionary War. His leadership was crucial in securing American independence from Great Britain.

* **The Articles of Confederation (1781-1789):** After the war, the United States was governed by the Articles of Confederation. This system proved to be weak and ineffective, leading to calls for a stronger national government.

* **The Constitutional Convention (1787):** Delegates from the states met in Philadelphia to revise the Articles of Confederation. Instead, they drafted a new Constitution that created a more powerful federal government. Washington presided over the convention, lending his prestige and influence to the process.

* **The Constitution and the Presidency:** The Constitution established the office of the President of the United States.

* **Election of 1789:** George Washington was unanimously elected as the first president by the Electoral College in 1789. There were no opposing candidates. This reflected the immense respect and trust the nation had in him.

* **First Term (1789-1793):** Washington established many precedents for the presidency, including the formation of a cabinet, the practice of delivering an annual address to Congress, and the idea of serving only two terms. He focused on establishing a stable national government, paying off the national debt, and maintaining neutrality in foreign affairs.

* **Second Term (1793-1797):** Washington faced challenges such as the Whiskey Rebellion and growing partisan divisions. He decided to retire after two terms, setting another crucial precedent for peaceful transitions of power.

* **Significance:** Washington's leadership and integrity were essential in establishing the legitimacy and credibility of the new government. He is often considered the "Father of His Country" for his pivotal role in the founding of the United States.

```

### Traces on LangDB

When you run queries against your agent, LangDB automatically captures detailed traces of all agent interactions.

## Next Steps: Advanced OpenAI Agents SDK Integration

This guide covered the basics of integrating LangDB with the OpenAI Agents SDK using a history and maths agent example. For more complex scenarios and advanced use cases, check out the resources in the [Guides section](https://app.gitbook.com/s/jwXvc8GvLP6kUvOASaLP/).

# Working with LangGraph

Automatically instrument LangChain chains and agents with LangDB—gain live traces, cost analytics, and latency insights through init().

LangDB provides seamless tracing and observability for LangChain-based applications.

## Installation

Install the LangDB client with LangChain support:

```bash

pip install 'pylangdb[langchain]'

```

## Quick Start

### Export Environment Variables

```bash

export LANGDB_API_KEY=""

export LANGDB_PROJECT_ID=""

export LANGDB_API_BASE_URL='https://api.us-east-1.langdb.ai'

```

### Initialize LangDB

Import and run `init()` **before** configuring your LangChain or LangGraph code:

```python

from pylangdb.langchain import init

# Initialise LangDB

init()

```

### Define your Agent

```python

# Your existing LangChain code works with proper configuration
from langchain.chat_models import ChatOpenAI
from langchain.schema import HumanMessage
import os

api_base = "https://api.us-east-1.langdb.ai"
api_key = os.getenv("LANGDB_API_KEY")
project_id = os.getenv("LANGDB_PROJECT_ID")

# Default headers for API requests
default_headers: dict[str, str] = {
    "x-project-id": project_id
}

# Initialize OpenAI LLM with LangDB configuration
llm = ChatOpenAI(
    model_name="gpt-4o",
    temperature=0.3,
    openai_api_base=api_base,
    openai_api_key=api_key,
    default_headers=default_headers,
)

result = llm.invoke([HumanMessage(content="Hello, LangDB!")])

```

Once LangDB is initialized, all calls to `llm`, intermediate steps, tool executions, and nested chains are automatically traced and linked under a single session.

## Complete LangGraph Agent Example

Here is a full LangGraph example, based on a ReAct agent, that uses LangDB tracing.

### Example code

> Check out the full sample on GitHub:

### Setup Environment

Install the libraries using pip:

```bash

pip install langgraph 'pylangdb[langchain]' langchain_openai geopy

```

### Export Environment Variables

```bash

export LANGDB_API_KEY=""

export LANGDB_PROJECT_ID=""

export LANGDB_API_BASE_URL='https://api.us-east-1.langdb.ai'

```

### main.py

```python

# Initialize LangDB tracing
from pylangdb.langchain import init
init()

import os
from typing import Annotated, Sequence, TypedDict
from datetime import datetime

# Import required libraries
from langchain_core.messages import BaseMessage, HumanMessage, AIMessage, ToolMessage
from langchain_core.tools import tool
from langgraph.graph.message import add_messages
from langgraph.prebuilt import ToolNode
from langgraph.graph import StateGraph, END
from langchain_openai import ChatOpenAI
from geopy.geocoders import Nominatim
from pydantic import BaseModel, Field
import requests


# Initialize the model
def create_model():
    """Create and return the ChatOpenAI model."""
    api_base = os.getenv("LANGDB_API_BASE_URL")
    api_key = os.getenv("LANGDB_API_KEY")
    project_id = os.getenv("LANGDB_PROJECT_ID")
    default_headers = {
        "x-project-id": project_id,
    }
    llm = ChatOpenAI(
        model_name='openai/gpt-4o',  # Choose any model from LangDB
        temperature=0.3,
        openai_api_base=api_base,
        openai_api_key=api_key,
        default_headers=default_headers
    )
    return llm


# Define the agent state
class AgentState(TypedDict):
    """The state of the agent."""
    messages: Annotated[Sequence[BaseMessage], add_messages]
    number_of_steps: int


# Define the weather tool
class SearchInput(BaseModel):
    location: str = Field(description="The city and state, e.g., San Francisco")
    date: str = Field(description="The forecasting date in format YYYY-MM-DD")


@tool("get_weather_forecast", args_schema=SearchInput, return_direct=True)
def get_weather_forecast(location: str, date: str) -> dict:
    """
    Retrieves the weather using Open-Meteo API for a given location (city) and a date (yyyy-mm-dd).
    Returns a dictionary with the time and temperature for each hour.
    """
    geolocator = Nominatim(user_agent="weather-app")
    location = geolocator.geocode(location)
    if not location:
        return {"error": "Location not found"}
    try:
        response = requests.get(
            f"https://api.open-meteo.com/v1/forecast?"
            f"latitude={location.latitude}&"
            f"longitude={location.longitude}&"
            "hourly=temperature_2m&"
            f"start_date={date}&end_date={date}",
            timeout=10
        )
        response.raise_for_status()
        data = response.json()
        return {
            time: f"{temp}°C"
            for time, temp in zip(
                data["hourly"]["time"],
                data["hourly"]["temperature_2m"]
            )
        }
    except Exception as e:
        return {"error": f"Failed to fetch weather data: {str(e)}"}


# Define the nodes
def call_model(state: AgentState) -> dict:
    """Call the model with the current state and return the response."""
    model = create_model()
    # bind_tools returns a new runnable, so keep the result
    model = model.bind_tools([get_weather_forecast])
    messages = state["messages"]
    response = model.invoke(messages)
    return {"messages": [response], "number_of_steps": state["number_of_steps"] + 1}


def route_to_tool(state: AgentState) -> str:
    """Determine the next step based on the model's response."""
    messages = state["messages"]
    last_message = messages[-1]
    if hasattr(last_message, 'tool_calls') and last_message.tool_calls:
        return "call_tool"
    return END


# Create the graph
def create_agent():
    """Create and return the LangGraph agent."""
    # Create the graph
    workflow = StateGraph(AgentState)
    workflow.add_node("call_model", call_model)
    workflow.add_node("call_tool", ToolNode([get_weather_forecast]))
    workflow.set_entry_point("call_model")
    workflow.add_conditional_edges(
        "call_model",
        route_to_tool,
        {
            "call_tool": "call_tool",
            END: END
        }
    )
    workflow.add_edge("call_tool", "call_model")
    return workflow.compile()


def main():
    agent = create_agent()
    query = f"What's the weather in Paris today? Today is {datetime.now().strftime('%Y-%m-%d')}."
    initial_state = {
        "messages": [HumanMessage(content=query)],
        "number_of_steps": 0
    }
    print(f"Query: {query}")
    print("\nRunning agent...\n")
    for output in agent.stream(initial_state):
        for key, value in output.items():
            if key == "__end__":
                continue
            print(f"\n--- {key.upper()} ---")
            if key == "messages":
                for msg in value:
                    if hasattr(msg, 'content'):
                        print(f"{msg.type}: {msg.content}")
                    if hasattr(msg, 'tool_calls') and msg.tool_calls:
                        print(f"Tool Calls: {msg.tool_calls}")
            else:
                print(value)


if __name__ == "__main__":
    main()

```
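The response-shaping step inside `get_weather_forecast` can be exercised without a network call. The sketch below isolates that step in a hypothetical helper, `hourly_temperatures`, and feeds it a made-up payload that has the same `hourly.time` / `hourly.temperature_2m` shape the tool reads; the values are illustrative only.

```python
def hourly_temperatures(data: dict) -> dict[str, str]:
    """Map each hourly timestamp to a formatted temperature string."""
    hourly = data["hourly"]
    return {
        time: f"{temp}°C"
        for time, temp in zip(hourly["time"], hourly["temperature_2m"])
    }


# Made-up payload shaped like the JSON the tool parses; not real forecast data.
sample = {
    "hourly": {
        "time": ["2025-07-01T00:00", "2025-07-01T01:00"],
        "temperature_2m": [28.1, 27.0],
    }
}

print(hourly_temperatures(sample))
```

Keeping the parsing separate from the HTTP request makes it easy to unit-test the tool's output format independently of the geocoding and forecast services.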

### Running your Agent

Navigate to the directory that contains `main.py` and run the following command:

```bash

python main.py

```

#### Output

{% code overflow="wrap" %}

```bash

--- CALL_MODEL ---

{'messages': [AIMessage(content="The weather in Paris on July 1, 2025, is as follows:\n\n- 00:00: 28.1°C\n- 01:00: 27.0°C\n- 02:00: 26.3°C\n- 03:00: 25.7°C\n- 04:00: 25.1°C\n- 05:00: 24.9°C\n- 06:00: 25.8°C\n- 07:00: 27.6°C\n- 08:00: 29.6°C\n- 09:00: 31.7°C\n- 10:00: 33.7°C\n- 11:00: 35.1°C\n- 12:00: 36.3°C\n- 13:00: 37.3°C\n- 14:00: 38.6°C\n- 15:00: 37.9°C\n- 16:00: 38.1°C\n- 17:00: 37.8°C\n- 18:00: 37.3°C\n- 19:00: 35.3°C\n- 20:00: 33.2°C\n- 21:00: 30.8°C\n- 22:00: 28.7°C\n- 23:00: 27.3°C\n\nIt looks like it's going to be a hot day in Paris!", additional_kwargs={'refusal': None}, response_metadata={'token_usage': {'completion_tokens': 319, 'prompt_tokens': 585, 'total_tokens': 904, 'completion_tokens_details': None, 'prompt_tokens_details': None, 'cost': 0.005582999999999999}, 'model_name': 'gpt-4o', 'system_fingerprint': None, 'id': '3bbde343-79e3-4d8f-bd97-b07179ee92c0', 'service_tier': None, 'finish_reason': 'stop', 'logprobs': None}, id='run--4fd3896d-1fbd-4c91-9c21-bd6cf3d2949e-0', usage_metadata={'input_tokens': 585, 'output_tokens': 319, 'total_tokens': 904, 'input_token_details': {}, 'output_token_details': {}})], 'number_of_steps': 2}

```

{% endcode %}
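The `response_metadata` in the output above carries token counts and a `cost` field. A small helper can pull those out for logging; this is a sketch that assumes the metadata keeps the shape shown in the sample output, and `summarize_usage` is a hypothetical name.

```python
def summarize_usage(response_metadata: dict) -> dict:
    """Extract model name, token counts, and cost from a response_metadata dict."""
    usage = response_metadata.get("token_usage", {})
    return {
        "model": response_metadata.get("model_name"),
        "prompt_tokens": usage.get("prompt_tokens"),
        "completion_tokens": usage.get("completion_tokens"),
        "total_tokens": usage.get("total_tokens"),
        "cost": usage.get("cost"),
    }


# Values copied from the sample output above
metadata = {
    "token_usage": {
        "completion_tokens": 319,
        "prompt_tokens": 585,
        "total_tokens": 904,
        "cost": 0.005582999999999999,
    },
    "model_name": "gpt-4o",
    "finish_reason": "stop",
}

summary = summarize_usage(metadata)
print(summary)
```

With a real LangChain message, the same helper could be called as `summarize_usage(message.response_metadata)`; missing keys simply come back as `None`.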

### Traces on LangDB

When you run queries against your agent, LangDB automatically captures detailed traces of all agent interactions.

## Next Steps: Advanced LangGraph Integration

This guide covered the basics of integrating LangDB with LangGraph using a ReAct agent example. For more complex scenarios and advanced use cases, check out the resources in the [Guides section](https://app.gitbook.com/s/jwXvc8GvLP6kUvOASaLP/).

# Working with Agno

Unlock full observability for Agno agents: capture LLM calls, tool execution, and agent interactions with LangDB’s init().

LangDB’s Agno integration provides end-to-end tracing for your Agno agent pipelines.

## Installation

Install the LangDB client with the Agno feature flag:

```bash

pip install 'pylangdb[agno]'

```

## Quick Start

### Export Environment Variables

Set your LangDB credentials:

```bash

export LANGDB_API_KEY=""

export LANGDB_PROJECT_ID=""

```

### Initialize Tracing

Import and run `init()` **before** configuring your Agno code:

```python

from pylangdb.agno import init

# Initialise LangDB

init()

```

### Configure your Agno code

```python

import os

from pylangdb.agno import init
init()

from agno.agent import Agent
from agno.tools.duckduckgo import DuckDuckGoTools
from agno.models.langdb import LangDB

# Configure LangDB-backed model
langdb_model = LangDB(
    id="openai/gpt-4",
    api_key=os.getenv("LANGDB_API_KEY"),
    project_id=os.getenv("LANGDB_PROJECT_ID"),
)

# Create and run your agent
agent = Agent(
    name="Web Agent",
    role="Search the web for information",
    model=langdb_model,
    tools=[DuckDuckGoTools()],
    instructions="Answer questions using web search",
)

response = agent.run("What is LangDB?")
print(response)

```

All Agno interactions, from invocation through tool calls to final output, are traced with LangDB.

## Complete Agno Example

Here is a full example based on a web-search multi-agent team built with Agno.

### Example code

> Check out the full sample on GitHub:

### Setup Environment

```

pip install agno 'pylangdb[agno]' duckduckgo-search

```

### Export Environment Variables

```bash

export LANGDB_API_KEY=""

export LANGDB_PROJECT_ID=""

```

### main.py

```python
import os
from textwrap import dedent

# Initialize LangDB tracing and import model
from pylangdb.agno import init

init()

from agno.models.langdb import LangDB

# Import Agno agent components
from agno.agent import Agent
from agno.tools.duckduckgo import DuckDuckGoTools


# Function to create a LangDB model with selectable model name
def create_langdb_model(model_name="openai/gpt-4.1"):
    return LangDB(
        id=model_name,
        api_key=os.getenv("LANGDB_API_KEY"),
        project_id=os.getenv("LANGDB_PROJECT_ID"),
    )


web_agent = Agent(
    name="Web Agent",
    role="Search the web for comprehensive information and current data",
    model=create_langdb_model("openai/gpt-4.1"),
    tools=[DuckDuckGoTools()],
    instructions="Always use web search tools to find current and accurate information. Search for multiple aspects of the topic to gather comprehensive data.",
    show_tool_calls=True,
    markdown=True,
)

writer_agent = Agent(
    name="Writer Agent",
    role="Write comprehensive article on the provided topic",
    model=create_langdb_model("anthropic/claude-3.7-sonnet"),
    instructions="Use outlines to write articles",
    show_tool_calls=True,
    markdown=True,
)

agent_team = Agent(
    name="Research Team",
    team=[web_agent, writer_agent],
    model=create_langdb_model("gemini/gemini-2.0-flash"),
    instructions=dedent("""\
        You are the coordinator of a research team with two specialists:

        1. Web Agent: Has DuckDuckGo search tools and must be used for ALL research tasks
        2. Writer Agent: Specializes in creating comprehensive articles

        WORKFLOW:
        1. ALWAYS delegate research tasks to the Web Agent first
        2. The Web Agent MUST use web search tools to gather current information
        3. Then delegate writing tasks to the Writer Agent using the research findings
        4. Ensure comprehensive coverage of the topic through multiple searches

        IMPORTANT: Never attempt to answer without first having the Web Agent conduct searches.
    """),
    show_tool_calls=True,
    markdown=True,
)

agent_team.print_response(
    "I need a comprehensive article about the Eiffel Tower. "
    "Please have the Web Agent search for current information about its history, architectural significance, and cultural impact. "
    "Then have the Writer Agent create a detailed article based on the research findings.",
    stream=True
)
```

### Running your Agent

Navigate to the parent directory of your agent project and run the following command:

```bash

python main.py

```

### Traces on LangDB

When you run queries against your agent, LangDB automatically captures detailed traces of all agent interactions:

## Next Steps: Advanced Agno Integration

This guide covered the basics of integrating LangDB with Agno using a Web Search agent example. For more complex scenarios and advanced use cases, check out our comprehensive resources in the [Guides Section](https://app.gitbook.com/s/jwXvc8GvLP6kUvOASaLP/).

# Working with CrewAI

Unlock full observability for CrewAI agents and tasks: capture LLM calls, task execution, and agent interactions with LangDB’s init().

LangDB makes it effortless to trace CrewAI workflows end-to-end. With a single `init()` call, all agent interactions, task executions, and LLM calls are captured.

## Installation

Install the LangDB client with the CrewAI feature flag:

```bash

pip install 'pylangdb[crewai]'

```

## Quick Start

### Export Environment Variables

Set your LangDB credentials:

```bash

export LANGDB_API_KEY=""

export LANGDB_PROJECT_ID=""

export LANGDB_API_BASE_URL='https://api.us-east-1.langdb.ai'

```

### Initialize Tracing

Import and call `init()` **before** configuring your CrewAI code:

```python

from pylangdb.crewai import init

# Initialise LangDB

init()

```

### Configure your CrewAI code

```python
import os

from pylangdb.crewai import init

# Initialise LangDB tracing before configuring CrewAI
init()

from crewai import Agent, Task, Crew, LLM

# Configure LLM with LangDB headers
llm = LLM(
    model="openai/gpt-4o",  # Use LiteLLM-style model names
    api_key=os.getenv("LANGDB_API_KEY"),
    base_url=os.getenv("LANGDB_API_BASE_URL"),
    extra_headers={"x-project-id": os.getenv("LANGDB_PROJECT_ID")}
)

# Define agents and tasks as usual
researcher = Agent(
    role="researcher",
    goal="Research topic thoroughly",
    backstory="You are an expert researcher",
    llm=llm,
    verbose=True
)

# CrewAI tasks need an expected_output alongside the description
task = Task(
    description="Research the given topic",
    expected_output="A short summary of the findings",
    agent=researcher,
)

crew = Crew(agents=[researcher], tasks=[task])

# Kick off the workflow
result = crew.kickoff()
print(result)
```

All CrewAI calls—agent initialization, task execution, and model responses—are automatically linked.

## Complete CrewAI example

Here is a full example based on a CrewAI report-writing agent.

### Example code

> Check out the full sample on GitHub:

### Setup Environment

```bash

pip install crewai 'pylangdb[crewai]' crewai_tools setuptools python-dotenv

```

### Export Environment Variables

You also need an API key from [Serper.dev](https://serper.dev/), which the `SerperDevTool` used below reads from `SERPER_API_KEY`.

```bash
export LANGDB_API_KEY=""
export LANGDB_PROJECT_ID=""
export LANGDB_API_BASE_URL='https://api.us-east-1.langdb.ai'
export SERPER_API_KEY=""
```

### main.py

```python
#!/usr/bin/env python3

import os
import sys

from pylangdb.crewai import init

init()

from dotenv import load_dotenv
from crewai import Agent, Task, Crew, Process, LLM
from crewai_tools import SerperDevTool

load_dotenv()


def create_llm(model):
    return LLM(
        model=model,
        api_key=os.environ.get("LANGDB_API_KEY"),
        base_url=os.environ.get("LANGDB_API_BASE_URL"),
        extra_headers={"x-project-id": os.environ.get("LANGDB_PROJECT_ID")}
    )


class ResearchPlanningCrew:
    def __init__(self, topic):
        # Store the topic so the task descriptions can reference it
        self.topic = topic

    def researcher(self) -> Agent:
        return Agent(
            role="Research Specialist",
            goal="Research topics thoroughly",
            backstory="Expert researcher with skills in finding information",
            tools=[SerperDevTool()],
            llm=create_llm("openai/gpt-4o"),
            verbose=True
        )

    def planner(self) -> Agent:
        return Agent(
            role="Strategic Planner",
            goal="Create actionable plans based on research",
            backstory="Strategic planner who breaks down complex challenges",
            reasoning=True,
            max_reasoning_attempts=3,
            llm=create_llm("openai/anthropic/claude-3.7-sonnet"),
            verbose=True
        )

    def research_task(self) -> Task:
        return Task(
            description=f"Research {self.topic} thoroughly and compile information",
            agent=self.researcher(),
            expected_output="Comprehensive research report"
        )

    def planning_task(self, research_task: Task) -> Task:
        return Task(
            description=f"Create a strategic plan for {self.topic} based on research",
            agent=self.planner(),
            expected_output="Strategic execution plan with phases and goals",
            context=[research_task]
        )

    def crew(self) -> Crew:
        # Build each task once so the planning context is the task that actually runs
        research = self.research_task()
        planning = self.planning_task(research)
        return Crew(
            agents=[research.agent, planning.agent],
            tasks=[research, planning],
            verbose=True,
            process=Process.sequential
        )


def main():
    topic = sys.argv[1] if len(sys.argv) > 1 else "Artificial Intelligence in Healthcare"
    crew_instance = ResearchPlanningCrew(topic)
    result = crew_instance.crew().kickoff()
    print(result)


if __name__ == "__main__":
    main()
```

### Running your Agent

Navigate to the parent directory of your agent project and run the following command:

```bash

python main.py

```

### Traces on LangDB

When you run queries against your agent, LangDB automatically captures detailed traces of all agent interactions:

## Next Steps: Advanced CrewAI Integration

This guide covered the basics of integrating LangDB with CrewAI using a Research and Planning agent example. For more complex scenarios and advanced use cases, check out our comprehensive resources in the [Guides Section](https://app.gitbook.com/s/jwXvc8GvLP6kUvOASaLP/).

# Working with MCPs

Learn how to connect to MCP Servers using LangDB AI Gateway.

Instantly connect to managed MCP servers — skip the setup and start using fully managed MCPs with built-in authentication, seamless scalability, and full tracing. This guide gives you a quick walkthrough of how to get started with MCPs.

## Quick Example

In this example, we’ll create a [**Virtual MCP Server**](https://docs.langdb.ai/docs/concepts/virtual-mcp-servers) by combining Slack and Gmail MCPs — and then connect it to an MCP Client like **Cursor** for instant access inside your chats.

### Steps:

1. **Select Slack and Gmail** from MCP Servers in the Virtual MCP Section.

2. **Generate** a Virtual MCP URL automatically.

3. **Install** the MCP into Cursor with a single command.

Example install command:

```bash

npx @langdb/mcp setup slack_gmail_virtual https://api.langdb.ai/mcp/xxxxx --client cursor

```

## What Happens Under the Hood?

* Authentication is handled (via OAuth or API Key)

* Full tracing and observability are available (inputs, outputs, errors, latencies)

* MCP tools are treated just like normal function calls inside LangDB

## Next Steps:

* MCP Servers listed on LangDB:

* Explore [MCP Usecases](https://app.gitbook.com/o/BdRlRaljD2qHr7uIBrM0/s/jwXvc8GvLP6kUvOASaLP/).

# Working with Headers

Explore how LangDB API headers like x-thread-id, x-run-id, x-label, and x-project-id improve LLM tracing, observability, and session tracking for better API management and debugging.

LangDB API provides robust support for **HTTP headers**, enabling developers to manage API requests efficiently with enhanced tracing, observability, and organization.

These headers play a crucial role in structuring interactions with multiple LLMs by providing **tracing, request tracking, and session continuity**, making it easier to monitor and analyze API usage.

## Thread ID (x-thread-id)

**Usage:** Groups multiple related requests under the same conversation.

* Useful for tracking interactions over a single user session.

* Helps maintain context across multiple messages.

## Thread Title (x-thread-title)

**Usage:** Assigns a custom, human-readable title to a thread.

* This title is displayed in the LangDB UI, making it easier to identify and search for specific conversations.

## Public Thread (x-thread-public)

**Usage:** Makes a thread publicly accessible via a shareable link.

* Set the value to `1` or `true` to enable public sharing.

* The public URL will be: `https://app.langdb.ai/sharing/threads/{thread_id}`

* The `x-thread-title`, if set, will be displayed on the public thread page.

Check [Threads](https://docs.langdb.ai/docs/concepts/thread) for more details.

## Run ID (x-run-id)

**Usage:** Tracks a unique workflow execution in LangDB, such as a model call or tool invocation.

* Enables precise tracking and debugging.

* Each Run is independent for better observability.

Check [Run](https://docs.langdb.ai/docs/concepts/run) for more details.

## Label (x-label)

**Usage:** Adds a custom tag or label to an LLM model call for easier categorization.

* Helps with tracing multiple agents.

Check [Label](https://docs.langdb.ai/docs/concepts/label) for more details.

## Project ID (x-project-id)

**Usage:** Identifies the project under which the request is being made.

* Helps in cost tracking, monitoring, and organizing API calls within a specific project.

* Can be set in headers or directly in the API base URL `https://api.us-east-1.langdb.ai/${langdbProjectId}/v1`
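
The headers above can be collected in one place before a request is sent. The following is a minimal sketch using only the header names and base URL pattern listed in this section; the helper names `project_base_url` and `langdb_headers` are hypothetical and not part of any LangDB SDK.

```python
from typing import Dict, Optional

# Host taken from the examples in this guide
BASE_URL = "https://api.us-east-1.langdb.ai"


def project_base_url(project_id: str) -> str:
    """Base URL that embeds the project ID, as an alternative to x-project-id."""
    return f"{BASE_URL}/{project_id}/v1"


def langdb_headers(
    project_id: Optional[str] = None,
    thread_id: Optional[str] = None,
    thread_title: Optional[str] = None,
    thread_public: bool = False,
    run_id: Optional[str] = None,
    label: Optional[str] = None,
) -> Dict[str, str]:
    """Return only the LangDB headers that were actually supplied."""
    candidates = {
        "x-project-id": project_id,
        "x-thread-id": thread_id,
        "x-thread-title": thread_title,
        "x-run-id": run_id,
        "x-label": label,
    }
    headers = {name: value for name, value in candidates.items() if value}
    if thread_public:
        headers["x-thread-public"] = "true"
    return headers


headers = langdb_headers(
    project_id="my-project",  # placeholder values for illustration
    thread_id="thread-123",
    label="research-agent",
)
print(headers)
print(project_base_url("my-project"))
```

With an OpenAI-compatible client, a dictionary like this would typically be passed as default or per-request headers.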

# User Tracking

Track users in LangDB AI Gateway to analyze usage, optimize performance, and improve chatbot experiences.

LangDB AI enables user tracking to collect analytics and monitor usage patterns efficiently. By associating metadata with requests, developers can analyze interactions, optimize performance, and enhance user experience.

## Example: Chatbot Analytics with User Tracking

For a chatbot service handling multiple users, tracking enables:

* **Recognizing returning users**: Maintain conversation continuity.

* **Tracking usage trends**: Identify common queries to improve responses.

* **User segmentation**: Categorize users using tags (e.g., "websearch", "support").

* **Analytics**: Identify heavy users and allocate resources efficiently.

```bash
curl 'https://api.us-east-1.langdb.ai/v1/chat/completions' \
  -H 'authorization: Bearer LangDBApiKey' \
  -H 'Content-Type: application/json' \
  -d '{
    "model": "openai/gpt-4o-mini",
    "stream": true,
    "messages": [
      {
        "role": "user",
        "content": "Def bubbleSort()"
      }
    ],
    "extra": {
      "user": {
        "id": "7",
        "name": "mrunmay",
        "tags": ["coding", "software"]
      }
    }
  }'
```

### User Tracking Fields

* **`extra.user.id`**: Unique user identifier.

* **`extra.user.name`**: User alias.

* **`extra.user.tags`**: Custom tags to classify users (e.g., "coding", "software").
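
The same fields can be attached from application code. This is a small sketch of building the request body shown in the curl example; `with_user` is a hypothetical helper, and only the `extra.user.id`, `extra.user.name`, and `extra.user.tags` fields come from this guide.

```python
import json
from typing import Any, Dict, List, Optional


def with_user(
    payload: Dict[str, Any],
    user_id: str,
    name: Optional[str] = None,
    tags: Optional[List[str]] = None,
) -> Dict[str, Any]:
    """Return a copy of the payload with extra.user filled in."""
    user: Dict[str, Any] = {"id": user_id}
    if name is not None:
        user["name"] = name
    if tags:
        user["tags"] = list(tags)
    # Preserve any other keys already present under "extra"
    extra = dict(payload.get("extra", {}))
    extra["user"] = user
    return {**payload, "extra": extra}


base_payload = {
    "model": "openai/gpt-4o-mini",
    "messages": [{"role": "user", "content": "Def bubbleSort()"}],
}
body = with_user(base_payload, user_id="7", name="mrunmay", tags=["coding", "software"])
print(json.dumps(body, indent=2))
```

Returning a copy keeps the original payload reusable for requests made on behalf of other users.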

## Fetching User Analytics & Usage Data

Once users are tracked, analytics and usage APIs can be used to retrieve insights based on `id`, `name`, or `tags`.

Check out the [Usage](https://docs.langdb.ai/docs/features/usage#usage-apis) and [Analytics](https://docs.langdb.ai/docs/features/analytics#analytics-apis) sections for more details.

Example:

```bash
curl -L \
  --request POST \
  --url 'https://api.us-east-1.langdb.ai/analytics/summary' \
  --header 'Authorization: Bearer langDBAPIKey' \
  --header 'X-Project-Id: langDBProjectID' \
  --header 'Content-Type: application/json' \
  --data '{
    "user_id": "7",
    "user_name": "mrunmay",
    "user_tags": ["software", "code"]
  }'
```

Example response:

```json

{

"summary": [

{

"total_cost": 0.00030366,

"total_requests": 1,

"total_duration": 6240.888,

"avg_duration": 6240.9,

"duration": 6240.9,

"duration_p99": 6240.9,

"duration_p95": 6240.9,

"duration_p90": 6240.9,

"duration_p50": 6240.9,

"total_input_tokens": 1139,

"total_output_tokens": 137,

"avg_ttft": 6240.9,

"ttft": 6240.9,

"ttft_p99": 6240.9,

"ttft_p95": 6240.9,

"ttft_p90": 6240.9,

"ttft_p50": 6240.9,

"tps": 204.46,

"tps_p99": 204.46,

"tps_p95": 204.46,

"tps_p90": 204.46,

"tps_p50": 204.46,

"tpot": 0.05,

"tpot_p99": 0.05,

"tpot_p95": 0.05,

"tpot_p90": 0.05,

"tpot_p50": 0.05,

"error_rate": 0.0,

"error_request_count": 0

}

],

"start_time_us": 1737547895565066,

"end_time_us": 1740139895565066

}

```
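
A response like this can be reduced to a few headline numbers. The sketch below works on a trimmed copy of the example response; it assumes that `start_time_us` and `end_time_us` are microsecond timestamps (suggested by the `_us` suffix, not confirmed here), and `summarize` is a hypothetical helper.

```python
from typing import Any, Dict


def summarize(response: Dict[str, Any]) -> Dict[str, float]:
    """Derive per-request cost, token totals, and window length from a summary."""
    row = response["summary"][0]
    requests = row["total_requests"]
    tokens = row["total_input_tokens"] + row["total_output_tokens"]
    window_seconds = (response["end_time_us"] - response["start_time_us"]) / 1_000_000
    return {
        "cost_per_request": row["total_cost"] / requests if requests else 0.0,
        "total_tokens": tokens,
        "cost_per_1k_tokens": row["total_cost"] / tokens * 1000 if tokens else 0.0,
        "window_days": window_seconds / 86_400,
    }


# Trimmed copy of the example response above
sample = {
    "summary": [
        {
            "total_cost": 0.00030366,
            "total_requests": 1,
            "total_input_tokens": 1139,
            "total_output_tokens": 137,
        }
    ],
    "start_time_us": 1737547895565066,
    "end_time_us": 1740139895565066,
}
stats = summarize(sample)
print(stats)
```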

# Using Parameters

Configure temperature, max\_tokens, logit\_bias, and more with LangDB AI Gateway. Test easily via API, UI, or Playground.

LangDB AI Gateway supports standard LLM parameters such as temperature, max\_tokens, stop sequences, logit\_bias, and more.

## API Usage:

{% tabs %}

{% tab title="Python" %}

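```python
import os

from openai import OpenAI

# Point the OpenAI client at LangDB; the project ID is part of the base URL
client = OpenAI(
    base_url=f"https://api.us-east-1.langdb.ai/{os.getenv('LANGDB_PROJECT_ID')}/v1",
    api_key=os.getenv("LANGDB_API_KEY"),
)

response = client.chat.completions.create(
    model="gpt-4o",  # Change Model
    messages=[
        {"role": "user", "content": "What are the earnings of Apple in 2022?"},
    ],
    temperature=0.7,  # temperature parameter
    max_tokens=150,  # max_tokens parameter
    stream=True  # stream parameter
)
```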

{% endtab %}

{% tab title="TypeScript" %}

```typescript

const response = await client.chat.completions.create({

model: 'gpt-4o-mini',

messages,

temperature: 0.7, // temperature parameter

max_tokens: 150, // max_tokens parameter

logit_bias: { '50256': -100 }, // logit_bias parameter

stream: true, // stream parameter

});

```

{% endtab %}

{% endtabs %}

## UI

You can also use the UI to test various parameters and get code snippets.

### Playground

Use the Playground to tweak parameters in real time via the [Virtual Model config](https://docs.langdb.ai/docs/concepts/virtual-models) and send test requests instantly.

### Samples

Explore ready-made code snippets complete with preconfigured parameters—copy, paste, and customize to fit your needs.

# Thread

Use LangDB Threads to group messages, maintain conversation context, and enable seamless multi-turn interactions.

A **Thread** is simply a grouping of **Message History** that maintains context in a conversation or workflow. Threads are useful for keeping track of past messages and ensuring continuity across multiple exchanges.

**Core Features:**

* **Contextual Continuity**: Ensures all related Runs are grouped for better observability.

* **Multi-Turn Support**: Simplifies managing interactions that require maintaining state across multiple Runs.

**Example:**

A user interacting with a chatbot over multiple turns (e.g., asking follow-up questions) generates several messages, but all are grouped under a single **Thread** to maintain continuity.

**Headers for Thread:**

* `x-thread-id`: Links all Runs in the same context or conversation.

* `x-thread-title`: Assigns a custom, human-readable title to the thread, making it easier to identify.

* `x-thread-public`: Makes the thread publicly accessible via a shareable link by setting its value to `1` or `true`.
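
One way to picture a thread from the client side is a small session object that keeps one thread ID for the whole conversation and issues a fresh run ID for every request. This is a sketch under assumptions: it assumes IDs are generated client-side as UUIDs and that the client resends the message history on each turn. `ThreadSession` is a hypothetical class, not part of a LangDB SDK.

```python
import uuid
from typing import Dict, List, Optional


class ThreadSession:
    """Keeps one x-thread-id for a conversation and a new x-run-id per request."""

    def __init__(self, title: Optional[str] = None, public: bool = False) -> None:
        self.thread_id = str(uuid.uuid4())
        self.title = title
        self.public = public
        self.messages: List[Dict[str, str]] = []

    def next_request(self, user_text: str) -> Dict[str, object]:
        """Append the user turn and return headers plus the message history so far."""
        self.messages.append({"role": "user", "content": user_text})
        headers = {"x-thread-id": self.thread_id, "x-run-id": str(uuid.uuid4())}
        if self.title:
            headers["x-thread-title"] = self.title
        if self.public:
            headers["x-thread-public"] = "true"
        return {"headers": headers, "messages": list(self.messages)}

    def record_reply(self, assistant_text: str) -> None:
        """Store the assistant turn so the next request carries full context."""
        self.messages.append({"role": "assistant", "content": assistant_text})


session = ThreadSession(title="Trip planning")
first = session.next_request("Find flights to Paris")
session.record_reply("Here are three options.")
second = session.next_request("Which one is cheapest?")
print(second["headers"]["x-thread-id"] == first["headers"]["x-thread-id"])
```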

# Trace

Track complete workflows with LangDB Traces. Get end-to-end visibility, multi-agent support, and error diagnosis.

A **Trace** represents the **complete lifecycle of a workflow**, spanning all components and systems involved.

**Core Features:**

* **End-to-End Visibility**: Tracks model calls and tool invocations across the entire workflow.

* **Multi Agent Ready**: Perfect for workflows that involve multiple services, APIs, or tools.

* **Error Diagnosis**: Quickly identify bottlenecks, failures, or inefficiencies in complex workflows.

**Parent-Trace:**

For workflows with nested operations (e.g., a workflow that triggers multiple sub-workflows), LangDB introduces the concept of a **Parent-Trace**, which links the parent workflow to its dependent sub-workflows. This hierarchical structure ensures you can analyze workflows at both macro and micro levels.

**Headers for Trace:**

* `trace-id`: Tracks the parent workflow.

* `parent-trace-id`: Links sub-workflows to the main workflow for hierarchical tracing.
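
The parent and child relationship can be sketched as follows: the parent workflow gets its own `trace-id`, and each sub-workflow gets a new `trace-id` plus a `parent-trace-id` pointing back at the parent. The header names come from the list above; generating the IDs client-side as UUIDs is an assumption, and `trace_headers` is a hypothetical helper.

```python
import uuid
from typing import Dict, Optional


def trace_headers(parent_trace_id: Optional[str] = None) -> Dict[str, str]:
    """Create headers for a new trace, optionally linked to a parent trace."""
    headers = {"trace-id": str(uuid.uuid4())}
    if parent_trace_id is not None:
        headers["parent-trace-id"] = parent_trace_id
    return headers


# One parent workflow that triggers two sub-workflows
parent = trace_headers()
children = [trace_headers(parent_trace_id=parent["trace-id"]) for _ in range(2)]

for child in children:
    print(child["trace-id"], "is a child of", child["parent-trace-id"])
```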

# Run

Track and monitor complete workflows with Runs in LangDB AI Gateway for better observability, debugging, and insights.

A **Run** represents a **single workflow or operation** executed within LangDB. This could be a model invocation, a tool call, or any other discrete task. Each Run is independent and can be tracked separately, making it easier to analyze and debug individual workflows.


**Core Features:**

* **Granular Tracking**: Analyze and optimize the performance and cost of individual Runs.

* **Independent Execution**: Each Run has a distinct lifecycle, enabling precise observability.

**Example:**

Generating a summary of a document, analyzing a dataset, or fetching information from an external API – each is a **Run**.

**Headers for Run:**

* `x-run-id`: Identifies a specific Run for tracking and debugging purposes.

# Label

Label LLM instances in LangDB AI Gateway for easy tracking, categorization, and improved observability.

A **Label** in LangDB defines an LLM instance with a unique identifier for categorization and tracking.

### Core Features

* **Model Categorization**: Assign labels to LLM instances.

* **Observability**: Track models by label.

### Headers for Label:

* **x-label**: Defines a label for an LLM instance.

```json

{

"x-label" : "research-agent"

}

```

# Message

A Message in LangDB AI Gateway defines structured interactions between users, systems, and models in workflows.

A **Message** is the **basic unit of communication** in LangDB workflows. Messages define the interaction between the user, the system, and the model. Every workflow is built around exchanging and processing messages.

**Core Features:**

* **Structured Interactions**: Messages define roles (`user`, `system`, `assistant`) to organize interactions clearly.

* **Multi-Role Flexibility**: Different roles (e.g., `system` for instructions, `user` for queries) enable complex workflows.

* **Dynamic Responses**: Messages form the backbone of LangDB’s chat-based interactions.

**Example:**

A simple interaction to generate a poem might look like this:

```json
[
  { "role": "system", "content": "You are a helpful assistant" },
  { "role": "user", "content": "Write me a poem about celluloids." }
]
```

# Virtual Models

Create, save, and reuse LLM configurations with Virtual Models in LangDB AI Gateway to streamline workflows and ensure consistent behavior.

LangDB’s **Virtual Models** let you save, share, and reuse model configurations—combining prompts, parameters, tools, and routing logic into a single named unit. This simplifies workflows and ensures consistent behavior across your apps, agents, and API calls.

Once saved, these configurations can be quickly accessed and reused across multiple applications.

## Why do you need Virtual Models

Virtual models in LangDB are more than just model aliases. They are **fully configurable AI agents** that:

* Let you define **system/user messages** upfront

* Support **routing logic** to dynamically choose between models

* Include **MCP integrations** and **guardrails**

* Are callable from **UI playground**, **API**, and **LangChain/OpenAI SDKs**

Use virtual models to manage:

* Prompt versioning and reuse

* Consistent testing across different models

* Precision tuning with per-model parameters

* Seamless integration of tools and control logic

* Routing using strategies like fallback, percentage-based, latency-based, optimized, and script-based selection

## Setting Up Virtual Model

{% embed url="" %}

1. Go to the **Models** section.

2. Click on **Create Virtual Model.**

3. **Set prompt messages** — define system and user messages to guide model behavior

4. **Set variables** (optional) — useful if your prompts require dynamic values

5. **Select router type**

* `None`: Use a single model only

* `Fallback`, `Random`, `Cost`, `Percentage`, `Latency`, `Optimized`: Configure smart routing across targets. Check out all [Routing Strategies](https://docs.langdb.ai/docs/features/routing).

6. **Add one or more targets**

* Each target defines a model, MCP servers, guardrails, system and user messages, a response format, and its parameters (e.g. temperature, max\_tokens, top\_p, penalties)

7. **Select MCP Servers** — connect tools like LangDB Search, Code Execution, or others

8. **Add guardrails** (optional) — for validation, transformation, or filtering logic

9. **Set response format** — choose between `text`, `json_object`, or `json_schema`

10. Give your virtual model a name and Save.

Your virtual model now appears in the **Models** section of your project, ready to be used anywhere a model is accepted.

## Updating and Versioning

You can edit virtual models anytime. LangDB supports formal versioning via the `@version` syntax:

* `langdb/my-model@latest` or `langdb/my-model` → resolves to the latest version

* `langdb/my-model@v1` or `langdb/my-model@1` → resolves to version 1

This allows you to safely test new versions, roll back to older ones, or maintain multiple stable variants of a model in parallel.
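
The naming rules above can be mirrored on the client side, for example to log which version a request targets. This is an illustrative parser for the `@version` syntax only; it is not LangDB's resolver, and `parse_model_ref` is a hypothetical helper.

```python
from typing import Optional, Tuple


def parse_model_ref(ref: str) -> Tuple[str, Optional[int]]:
    """Split 'langdb/my-model@v1' into ('langdb/my-model', 1).

    A version of None means "latest" (no suffix, or an explicit '@latest').
    """
    name, separator, version = ref.partition("@")
    if not separator or version == "latest":
        return name, None
    digits = version[1:] if version.startswith("v") else version
    if not digits.isdigit():
        raise ValueError(f"unrecognised version suffix: {version!r}")
    return name, int(digits)


for ref in ("langdb/my-model", "langdb/my-model@latest", "langdb/my-model@v1", "langdb/my-model@1"):
    print(ref, "->", parse_model_ref(ref))
```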

## Using Your Virtual Model

Once saved, your virtual model is fully available across all LangDB interfaces:

* **Chat Playground**: Select it from the model dropdown and test interactively.

* **OpenAI-Compatible SDKs**: Works seamlessly with OpenAI clients by changing only the `model` name.

* **LangChain / CrewAI / other frameworks**: Call it just like any base model by using `model="langdb/my-model@latest"` or a specific version like `@v1`.

This makes virtual models a portable, modular building block across all parts of your AI stack.

# Routing with Virtual Model

Manage routing strategies easily in LangDB AI Gateway’s UI to boost efficiency, speed, and reliability in AI workflows.

In LangDB AI Gateway, any virtual model can act as a router. Just define a strategy and a list of target models, and it will route requests based on metrics like cost, latency, percentage, or custom rules.

## Setting up Routing

{% embed url="" %}

Setting up routing in a virtual model is straightforward:

1. Open any virtual model in the Chat Playground and click **Show Config**

2. Choose a **routing strategy** (like `fallback`, `optimized`, `percentage`, etc.)

3. Add your **target models**—each one can be configured just like the virtual models you set up in the previous section

Each target defines:

* Which model to use

* Prompt

* MCP Servers

* Guardrails

* Response Format

* Custom parameters like temperature, max\_tokens, penalties, etc.

All routing options are available directly in the virtual model config panel.

Check more about the [Routing Strategies](https://docs.langdb.ai/docs/features/routing).
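
To make the idea of a strategy concrete, here is a toy, client-side illustration of two of the strategies named above: fallback (try targets in order) and percentage (pick a target in proportion to a weight). It is a conceptual sketch only; LangDB performs routing inside the gateway, and its actual implementation is not shown in this guide. All function names are hypothetical, and `fake_call` stands in for a real model call.

```python
import random
from typing import Callable, Dict, List, Sequence


def route_fallback(targets: Sequence[str], call: Callable[[str], str]) -> str:
    """Try each target in order and return the first successful response."""
    errors: List[str] = []
    for target in targets:
        try:
            return call(target)
        except Exception as exc:  # a real router would catch narrower errors
            errors.append(f"{target}: {exc}")
    raise RuntimeError("all targets failed: " + "; ".join(errors))


def route_percentage(weights: Dict[str, float], rng: random.Random) -> str:
    """Pick one target at random, in proportion to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def fake_call(model: str) -> str:
    """Stand-in for a model call; the first target simulates an outage."""
    if model == "openai/gpt-4o":
        raise TimeoutError("simulated outage")
    return f"answered by {model}"


print(route_fallback(["openai/gpt-4o", "openai/gpt-4o-mini"], fake_call))
print(route_percentage({"openai/gpt-4o": 0.2, "openai/gpt-4o-mini": 0.8}, random.Random(0)))
```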

# Draft Mode

Simplify version control with LangDB Virtual Models’ draft mode—safely iterate, preview, and publish model versions without impacting live traffic.

LangDB’s Virtual Models support a **draft mode** that streamlines version management and ensures safe, iterative changes. In draft mode, modifications are isolated from the published version until you explicitly publish, giving you confidence that live traffic is unaffected by in-progress edits.

## Version Workflow

1. **Edit in Draft**

* Making any change (e.g., adjusting parameters, adding guardrails, modifying messages) flips the version into a **Modified** draft.

2. **Save Draft**

* Click **Save** to record your changes. The draft is saved as a new version at the top of the version list, without affecting the live version.

* Live API traffic remains pointed at the last published version.

3. **Publish Draft**

* Once validated, click **Publish**:

* Saves the version as the new latest version.

* Directs all live chat completion traffic to this version.

* Keeps the previous published version visible in the list so you can reselect and republish if needed.

4. **Restore & Edit Previous Version**

* Open the version dropdown and select any listed version.

* The selected version loads into the editor.

* You can further modify this draft and click **Save** to create a new version entry.

5. **Re-Publish Any Version**

* To make any saved version live, select it from the dropdown and click **Publish**.
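
The workflow above can be summarised as a list of saved versions plus a pointer to whichever one is live. The following is a simplified mental model, not LangDB's implementation: saving appends a version without moving the pointer, and publishing moves the pointer. `VirtualModelVersions` and its methods are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class VirtualModelVersions:
    versions: List[Dict[str, object]] = field(default_factory=list)
    published: Optional[int] = None  # index of the version serving live traffic

    def save(self, config: Dict[str, object]) -> int:
        """Record a draft as a new version; live traffic is unaffected."""
        self.versions.append(dict(config))
        return len(self.versions) - 1

    def publish(self, index: int) -> None:
        """Point live traffic at any saved version."""
        if not 0 <= index < len(self.versions):
            raise IndexError(f"no such version: {index}")
        self.published = index

    def live_config(self) -> Dict[str, object]:
        """Configuration that API requests would currently receive."""
        if self.published is None:
            raise LookupError("nothing has been published yet")
        return self.versions[self.published]


model = VirtualModelVersions()
v0 = model.save({"temperature": 0.7})
model.publish(v0)
v1 = model.save({"temperature": 0.2})  # saved draft, not yet live
live_before = model.live_config()["temperature"]
model.publish(v1)
live_after = model.live_config()["temperature"]
model.publish(v0)  # re-publish the earlier version
live_rollback = model.live_config()["temperature"]
print(live_before, live_after, live_rollback)
```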

## API Behavior

All `chatCompletions` requests to a Virtual Model endpoint automatically target the **latest published version**. Drafts and restored drafts never receive live traffic until published.

```python
import os

from openai import OpenAI

client = OpenAI(
    base_url="https://api.us-east-1.langdb.ai",
    api_key=os.getenv("LANGDB_API_KEY"),
)

# Always hits current published version
response = client.chat.completions.create(
    model="openai/langdb/my-virtual-model@latest",
    messages=[...],  # your conversation messages
)
```

To preview changes in a draft or restored draft, switch the UI or JSON view selector to that draft and experiment in the Virtual Model Editor — all without impacting production calls.

## Best Practices

* **Iterate Safely**: Leverage drafts for experimental guardrails or parameter tuning without risking production stability.

* **Frequent Publishing**: Keep version history granular—publish stable drafts regularly to simplify tracking and rollbacks.

* **Use Restore Thoughtfully**: Before restoring, ensure any important unsaved draft work is committed or intentionally discarded.

# Virtual MCP Servers

Create Virtual MCP Servers in LangDB AI Gateway to unify tools, manage auth securely, and maintain full observability across workflows.

A **Virtual MCP Server** lets you create a customized set of MCP tools by combining functions from multiple MCP servers — all with scoped access, unified auth, and full observability.

{% embed url="" %}

Quick Guide on how to use virtual MCP Server

{% endembed %}

## Why Use a Virtual MCP?

* **Selective Tools**: Pick only the tools you need from existing MCP servers (e.g. Airtable's `list_records`, GitHub's `create_issue`, etc.)

* **Clean Auth Handling**: Add your API keys only if needed. Otherwise, LangDB handles OAuth for you.

* **Full Tracing**: Every call is traced on LangDB, with logs, latencies, input/output, and error metrics.

* **Easy Integration**: Works out of the box with Cursor, Claude, Windsurf, and more.

* **Version Lock-in**: Virtual MCPs are pinned to a specific server version to avoid breaking changes.

* **Poisoning Safety**: Prevents injection or override by malicious tool definitions from source MCPs.

## How to Set It Up

1. Go to the Virtual MCP Servers section of your LangDB project.

2. Select the tools you want to include.

3. (Optional) Add API keys or use LangDB-managed auth.

4. Click `Generate secure MCP URL`.

### Install in Cursor / Windsurf / Claude

Once you have the MCP URL:

{% code overflow="wrap" %}

```bash

npx @langdb/mcp setup figma https://api.staging.langdb.ai/mcp/xxxxx --client cursor

```

{% endcode %}

You're now ready to use your selected tools directly inside the editor.

### Try it in the playground

You can also try the Virtual MCP servers by adding the server in the [virtual model](https://docs.langdb.ai/docs/concepts/virtual-models) config.

# Custom MCP Servers

Learn how to connect your own custom MCP servers to LangDB AI Gateway.

While LangDB provides a rich library of pre-built MCP servers, you can also bring your own. By connecting a custom MCP server, you can leverage all the benefits of a [Virtual MCP Server](https://docs.langdb.ai/docs/concepts/virtual-mcp-servers), including:

* **Unified Interface**: Combine your custom tools with tools from other LangDB-managed servers.

* **Clean Auth Handling**: Let LangDB manage authentication, or provide your own API keys and headers.

* **Full Observability**: Get complete tracing for every call, with logs, latencies, and metrics.

* **Seamless Integration**: Works out-of-the-box with clients like Cursor, Claude, and Windsurf.

* **Enhanced Security**: Benefit from version pinning and protection against tool definition poisoning.

This guide explains how to connect your own custom MCP server, whether it uses an HTTP (REST API) or SSE (Server-Sent Events) transport.

## Connecting Your Custom Server

When creating a Virtual MCP Server, you can add your own server alongside the servers deployed and managed by LangDB.

### Steps to Configure a Custom Server

1. **Navigate to MCP Servers**: Go to the "MCP Servers" section in your LangDB project and click "Create Virtual MCP Server".

2. **Add a Custom Server**: In the "Server Configuration" section, click the "+ Add Server" button on the right and select "Custom" from the list.

3. **Configure Server Details**: A new "Custom Server" block will appear on the left. Fill in the following details:

* **Server Name**: Give your custom server a descriptive name.

* **Transport Type**: Choose either `HTTP (REST API)` or `SSE (Server-Sent Events)` from the dropdown.

* **HTTP/SSE URL**: Enter the endpoint URL for your custom MCP server. LangDB will attempt to connect to this URL to validate the server and fetch the available tools.

* **(Optional) HTTP Headers**: If your server requires specific HTTP headers for authentication or other purposes, you can add them here.

* **(Optional) Environment Variables**: If your server requires specific configuration via environment variables, you can add them.

4. **Select Tools**: Once LangDB successfully connects to your server, it will display a list of all the tools exposed by your MCP server. You can select which tools you want to include in your Virtual MCP Server.

5. **Generate URL**: After configuring your custom server and selecting the tools, you can generate the secure URL for your Virtual MCP Server and start using it in your applications.
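
Before filling in the form, it can help to check the details you plan to enter. The sketch below models the fields from step 3 as a plain data class and runs a few local sanity checks; the class, its field names, and the `"http"`/`"sse"` values are hypothetical stand-ins for the UI options, not a LangDB API.

```python
from dataclasses import dataclass, field
from typing import Dict, List
from urllib.parse import urlparse


@dataclass
class CustomServerConfig:
    name: str
    transport: str  # "http" for HTTP (REST API) or "sse" for Server-Sent Events
    url: str
    headers: Dict[str, str] = field(default_factory=dict)  # optional HTTP headers
    env: Dict[str, str] = field(default_factory=dict)  # optional environment variables

    def problems(self) -> List[str]:
        """Return a list of human-readable issues; empty means the config looks sane."""
        issues: List[str] = []
        if not self.name.strip():
            issues.append("server name is empty")
        if self.transport not in ("http", "sse"):
            issues.append(f"unknown transport: {self.transport!r}")
        parsed = urlparse(self.url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            issues.append(f"URL is not an absolute http(s) URL: {self.url!r}")
        return issues


good = CustomServerConfig(
    name="internal-tools",
    transport="sse",
    url="https://mcp.example.com/sse",  # placeholder endpoint
    headers={"Authorization": "Bearer <token>"},
)
bad = CustomServerConfig(name=" ", transport="grpc", url="not-a-url")
print(good.problems())
print(bad.problems())
```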

# Tracing

Track every model call, agent handoff, and tool execution for faster debugging and optimization.

LangDB Gateway provides detailed tracing to monitor, debug, and optimize LLM workflows.

Below is an example of a trace visualization from the dashboard, showcasing a detailed breakdown of the request stages:

In this example trace you’ll find:

* **Overview Metrics**

* **Cost**: Total spend for this request (e.g. $0.034).

* **Tokens**: Input (5,774) vs. output (1,395).

* **Duration**: Total end-to-end latency (29.52 s).

* **Timeline Breakdown**\

A parallel-track timeline showing each step—from moderation and relevance scoring to model inference and final reply.

* **Model Invocations**\

Every call to `gpt-4o-mini`, `gpt-4o`, etc., is plotted with precise start times and durations.

* **Agent Hand-offs**\

Transitions between your agents (e.g. search → booking → reply) are highlighted with custom labels like `transfer_to_reply_agent`.

* **Tool Integrations**\

External tools (e.g. `booking_tool`, `travel_tool`, `python_repl_tool`) appear inline with their execution times—so you can spot slow or failed runs immediately.

* **Guardrails**\

Rules like **Min Word Count** and **Travel Relevance** enforce domain-specific constraints and appear in the trace.

With this level of visibility you can quickly pinpoint bottlenecks, understand cost drivers, and ensure your multi-agent pipelines run smoothly.
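
As a rough illustration of how these trace fields can be used, the sketch below totals cost, computes end-to-end duration, and finds the slowest step. The `Span` class, its field names, and all sample values are made up for this example; they are not LangDB's trace schema or real measurements.

```python
from dataclasses import dataclass


@dataclass
class Span:
    """One step in a trace. Field names are illustrative, not LangDB's schema."""
    name: str
    kind: str            # "model", "tool", "guardrail", or "handoff"
    start_s: float       # offset from request start, in seconds
    duration_s: float
    cost_usd: float = 0.0


def summarize(spans):
    """Return (total cost, end-to-end duration, slowest span)."""
    total_cost = sum(s.cost_usd for s in spans)
    # Spans can overlap on parallel tracks, so end-to-end time is the latest end.
    end_to_end = max(s.start_s + s.duration_s for s in spans)
    slowest = max(spans, key=lambda s: s.duration_s)
    return total_cost, end_to_end, slowest


# Made-up sample spans, loosely shaped like the trace described above.
spans = [
    Span("min_word_count", "guardrail", 0.0, 0.2),
    Span("gpt-4o-mini", "model", 0.2, 3.1, cost_usd=0.004),
    Span("travel_tool", "tool", 3.3, 12.0),
    Span("transfer_to_reply_agent", "handoff", 15.3, 0.1),
    Span("gpt-4o", "model", 15.4, 9.0, cost_usd=0.030),
]

cost, duration, slowest = summarize(spans)
print(f"cost=${cost:.3f} duration={duration:.1f}s slowest={slowest.name}")
```

In this sample the tool call dominates latency while the larger model dominates cost, which is the kind of split the timeline view makes visible.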

# Auto Router

Stop guessing which model to pick. The Auto Router picks the best one for you—whether you care about cost, speed, or accuracy.

## Why Use Auto Router?

* **Save Costs** - Automatically uses cheaper models for simple queries

* **Get Faster Responses** - Routes to the fastest model when speed matters

* **Guarantee Accuracy** - Picks the best model for critical tasks

* **Handle Scale** - No configuration hell, just works

### Quick Start

#### Using API

```json
{
  "model": "router/auto",
  "messages": [
    {
      "role": "user",
      "content": "What's the capital of France?"
    }
  ]
}
```
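
Since the gateway exposes OpenAI-compatible APIs, a request body like the one above can be sent as an ordinary HTTP POST. The following is a minimal sketch using only the Python standard library. `BASE_URL`, `API_KEY`, the `/chat/completions` path, and the bearer-token header are assumptions based on the usual OpenAI convention; check your project settings for the real values.

```python
import json
import urllib.request

# Placeholder values: substitute your own gateway URL and API key.
BASE_URL = "https://gateway.example.com/v1"  # hypothetical endpoint
API_KEY = "YOUR_API_KEY"                     # hypothetical credential


def build_chat_request(model, user_message, base_url=BASE_URL, api_key=API_KEY):
    """Build (but do not send) a POST request for a chat completion."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = build_chat_request("router/auto", "What's the capital of France?")
# To send it: urllib.request.urlopen(req), then JSON-decode the response body.
```

Only the `model` value changes between the routing examples in this section, so the same helper covers all of them.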

#### Using UI

You can also try Auto Router through the LangDB dashboard:

*Figure: LangDB dashboard showing available Auto Router models and configuration options.*

> **Note:** The UI shows only a few router variations. For all available options and advanced configurations, use the API.

### Trace Example

Here's what happens behind the scenes when you use Auto Router:

That's it — no config needed. The router classifies the query and picks the best model automatically.

> If you already know the query type (e.g., Finance), skip auto-classification with `router/finance:accuracy`.

## Under the Hood

Behind the scenes, the Auto Router uses lightweight classifiers (NVIDIA for complexity, BART for topic) combined with LangDB's routing engine. These decisions are logged in traces so you can inspect why a query was sent to a specific model.

### How It Works

The Auto Router uses a two-stage classification process:

1. **Complexity Classification**: Uses NVIDIA's classification model to determine whether a query is high or low complexity.

2. **Topic Classification**: Uses Facebook's BART Large model to identify the query's topic from these categories:

   * Academia

   * Finance

   * Marketing

   * Maths

   * Programming

   * Science

   * Vision

   * Writing

Based on these classifications and your chosen optimization strategy, the router automatically selects the best model from your available options.
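
The two-stage flow can be sketched as below. The two classifier functions are deliberately naive stand-ins (a word count and a keyword lookup) for the NVIDIA and BART models, which run inside the gateway; the function names, thresholds, and keyword lists are all hypothetical.

```python
def classify_complexity(query):
    """Stand-in for the complexity classifier: long queries count as 'high'."""
    return "high" if len(query.split()) > 20 else "low"


def classify_topic(query):
    """Stand-in for the topic classifier: a simple keyword lookup."""
    keywords = {
        "finance": ["invest", "risk", "loan"],
        "programming": ["python", "bug", "function"],
        "maths": ["integral", "equation", "prime"],
    }
    lowered = query.lower()
    for topic, words in keywords.items():
        if any(word in lowered for word in words):
            return topic
    return "writing"  # arbitrary default for this sketch


def route(query, mode="balanced"):
    """Run both stages and return the routing decision as a dict."""
    return {
        "complexity": classify_complexity(query),
        "topic": classify_topic(query),
        "mode": mode,
    }


decision = route("Fix the bug in this Python function")
print(decision)
```

The resulting decision (complexity, topic, mode) is the input the routing engine would use to choose among the available models.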

### Router Behavior

| Router Syntax | What happens |
| ----------------------- | ------------ |
| `router/auto` | Classifies complexity + topic. Low-complexity queries go to cheaper models; high-complexity queries go to stronger models. Then applies your optimization strategy. |
| `router/auto:<mode>` | Classifies topic only. Ignores complexity and always applies the chosen optimization (cost, accuracy, etc.) for that topic. |
| `router/<topic>:<mode>` | Skips classification. Directly routes to the specified topic with the chosen optimization mode. |
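
The three forms (plain `router/auto`, `router/auto` followed by a colon and a mode, and a topic name followed by a colon and a mode) can be told apart mechanically. The parser below is an illustrative sketch of that reading of the table; `parse_router` and its return shape are hypothetical and not part of any LangDB SDK.

```python
TOPICS = {"academia", "finance", "marketing", "maths",
          "programming", "science", "vision", "writing"}
MODES = {"balanced", "accuracy", "cost", "latency", "throughput"}


def parse_router(model):
    """Describe which classification steps a router model string implies."""
    prefix, _, rest = model.partition("/")
    if prefix != "router" or not rest:
        raise ValueError(f"not a router model: {model!r}")
    target, _, mode = rest.partition(":")
    if mode and mode not in MODES:
        raise ValueError(f"unknown mode: {mode!r}")
    if target == "auto":
        # With an explicit mode, only the topic is classified.
        return {"classify_topic": True, "classify_complexity": not mode,
                "topic": None, "mode": mode or None}
    if target in TOPICS and mode:
        # Topic shortcut: no classification at all.
        return {"classify_topic": False, "classify_complexity": False,
                "topic": target, "mode": mode}
    raise ValueError(f"unsupported router syntax: {model!r}")


print(parse_router("router/auto"))
print(parse_router("router/auto:cost"))
print(parse_router("router/finance:accuracy"))
```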

## Optimization Modes

| Mode | What it does | Best for |
| ------------ | ------------------------------------------------------------------------ | ------------------------- |
| `balanced` | Intelligently distributes requests across models for optimal performance | General apps (default) |
| `accuracy` | Picks models with best benchmark scores | Research, compliance |
| `cost` | Routes to cheapest viable model | Support chatbots, FAQs |
| `latency` | Always picks the fastest | Real-time UIs, voice bots |
| `throughput` | Distributes across many models | High-volume pipelines |
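
To make the single-winner modes concrete, here is a small sketch of how `accuracy`, `latency`, and `cost` could each pick from the same candidate list. The `Model` fields, the sample numbers, and the reading of "viable" as a minimum benchmark score are assumptions for illustration; the gateway's actual selection logic is not documented here.

```python
from dataclasses import dataclass


@dataclass
class Model:
    name: str
    cost_per_1k: float  # USD per 1K tokens (made-up numbers)
    latency_s: float    # typical response time in seconds (made-up numbers)
    score: float        # benchmark score in [0, 1] (made-up numbers)


def pick(models, mode, min_score=0.6):
    """Pick one model for the single-winner modes."""
    if mode == "accuracy":
        return max(models, key=lambda m: m.score)
    if mode == "latency":
        return min(models, key=lambda m: m.latency_s)
    if mode == "cost":
        # "Viable" is interpreted here as meeting a minimum score.
        viable = [m for m in models if m.score >= min_score]
        return min(viable or models, key=lambda m: m.cost_per_1k)
    raise ValueError(f"mode {mode!r} spreads load and has no single winner")


models = [
    Model("model-small", 0.2, 0.4, 0.55),
    Model("model-medium", 0.6, 0.9, 0.75),
    Model("model-large", 3.0, 2.5, 0.92),
]

for mode in ("accuracy", "latency", "cost"):
    print(mode, "->", pick(models, mode).name)
```

`balanced` and `throughput` distribute requests across several models, so they are left out of this single-choice sketch.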

## Case Study

**Beating the Best Model**

Save costs without losing quality. Auto Router delivers best-model accuracy at a fraction of the price.

## Use Cases

### Cost Optimization

Perfect for FAQ bots, education apps, and high-volume content generation.

```json
{
  "model": "router/auto:cost",
  "messages": [
    {
      "role": "user",
      "content": "What are your business hours?"
    }
  ]
}
```

### Accuracy Optimization

Ideal for finance, medical, legal, and research applications.

```json
{
  "model": "router/auto:accuracy",
  "messages": [
    {
      "role": "user",
      "content": "Analyze this financial risk assessment"
    }
  ]
}
```

### Latency Optimization

Great for real-time assistants, voice bots, and interactive UIs.

```json
{
  "model": "router/auto:latency",
  "messages": [
    {
      "role": "user",
      "content": "What's the weather like today?"
    }
  ]
}
```

### Balanced (Load Balanced)

Intelligently distributes requests across available models for optimal performance. Works well for most business applications and integrations.

```json
{
  "model": "router/auto",
  "messages": [
    {
      "role": "user",
      "content": "Help me write a product description"
    }
  ]
}
```

## Direct Category Routing

If you already know your query belongs to a specific domain, you can skip classification and directly route to a topic with your chosen optimization mode.

```json
{
  "model": "router/finance:accuracy",
  "messages": [
    {
      "role": "user",
      "content": "Analyze the risk factors in this financial derivative"
    }
  ]
}
```

**Result:**

* Skips complexity + topic classification

* Directly applies accuracy optimization for the finance topic

* Routes to the highest-scoring finance-optimized model

**Available topic shortcuts:**

* `router/finance:<mode>`

* `router/writing:<mode>`

* `router/academia:<mode>`

* `router/programming:<mode>`

* `router/science:<mode>`

* `router/vision:<mode>`

* `router/marketing:<mode>`

* `router/maths:<mode>`

Where `<mode>` can be: `balanced`, `accuracy`, `cost`, `latency`, or `throughput`.

**Quick Decision Guide:**

* **Don't know the type?** → Use `router/auto`

* **Know the type?** → Jump straight with `router/<topic>:<mode>`
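
The decision guide translates into a small helper that builds the model string from what you know about the query. `router_model` is a hypothetical convenience function, and requiring an explicit mode for topic shortcuts is an assumption made to match the examples in this section.

```python
TOPICS = {"academia", "finance", "marketing", "maths",
          "programming", "science", "vision", "writing"}
MODES = {"balanced", "accuracy", "cost", "latency", "throughput"}


def router_model(topic=None, mode=None):
    """Build a router model string from an optional topic and mode."""
    if mode is not None and mode not in MODES:
        raise ValueError(f"unknown mode: {mode!r}")
    if topic is None:
        # Topic unknown: let the router classify the query.
        return "router/auto" if mode is None else f"router/auto:{mode}"
    if topic not in TOPICS:
        raise ValueError(f"unknown topic: {topic!r}")
    if mode is None:
        # Assumption: topic shortcuts are always written with a mode.
        raise ValueError("a topic shortcut needs an explicit mode")
    return f"router/{topic}:{mode}"


print(router_model())                       # topic unknown
print(router_model(mode="cost"))            # topic unknown, cost-optimized
print(router_model("finance", "accuracy"))  # topic known
```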

## Advanced Configuration

### Topic-Specific Routing

```json
{
  "model": "router/auto",
  "router": {
    "topic_routing": {
      "finance": "cost",
      "writing": "latency",
      "technical": "accuracy"
    }
  },
  "messages": [
    {
      "role": "user",
      "content": "Calculate the net present value of this investment"
    }
  ]
}
```
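
One way to read the `topic_routing` block is as a per-topic override of the optimization mode. The sketch below shows that lookup on the client side. Falling back to `balanced` for topics that are not listed is an assumption (it is the documented default mode), and `resolve_mode` is a hypothetical helper, not a gateway API.

```python
def resolve_mode(topic, router_config, default="balanced"):
    """Look up the optimization mode configured for a classified topic."""
    topic_routing = router_config.get("topic_routing", {})
    return topic_routing.get(topic, default)


# Same mapping as in the request above.
router_config = {
    "topic_routing": {
        "finance": "cost",
        "writing": "latency",
        "technical": "accuracy",
    }
}

print(resolve_mode("finance", router_config))  # configured override
print(resolve_mode("science", router_config))  # not listed, uses the default
```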

## Best Practices

1. **Choose the Right Mode** - Match optimization to your use case

2. **Monitor Performance** - Use LangDB's analytics to track routing decisions

3. **Combine with Fallbacks** - Add fallback models for high availability

4. **Test Different Modes** - Experiment to find the best fit
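
As one possible take on the fallback practice, the sketch below retries a request against a list of fallback models on the client side. The `send` argument is any callable you supply that performs the HTTP call and raises on failure; both it and the fallback model names are assumptions for this example, and the gateway may offer its own server-side fallback configuration.

```python
def complete_with_fallback(send, payload, fallback_models):
    """Try the payload's model first, then each fallback in order."""
    errors = []
    for model in [payload["model"], *fallback_models]:
        try:
            # Copy the payload so the caller's dict is never mutated.
            return send({**payload, "model": model})
        except Exception as exc:  # in real code, catch narrower exceptions
            errors.append((model, exc))
    raise RuntimeError(f"all models failed: {errors}")


# Demo with a fake sender that simulates the router timing out.
calls = []


def fake_send(request):
    calls.append(request["model"])
    if request["model"] == "router/auto":
        raise TimeoutError("simulated timeout")
    return {"model": request["model"], "content": "ok"}


result = complete_with_fallback(
    fake_send,
    {"model": "router/auto", "messages": []},
    fallback_models=["gpt-4o-mini"],
)
print(result["model"], calls)
```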

## Integration with Other Features

The Auto Router works seamlessly with:

* **Guardrails** - Apply content filtering before routing

* **MCP Servers** - Access external tools and data sources

* **Response Caching** - Cache responses for frequently asked questions

* **Analytics** - Track routing decisions and performance metrics

# Beating the Best Model

Save costs without losing quality. Auto Router delivers best-model accuracy at a fraction of the price.

Most developers assume that using the *best* model is the safest bet for every query.\

But in practice, that often means **paying more than you need to** — especially when cheaper models can handle simpler queries just as well.

LangDB’s Auto Router shows you don’t always need the “best” model — just the *right* model for the job.

## The Question We Asked